What if AI could detect your emotions better than your closest friend?

Introduction — Masayoshi Son

When people talk about artificial intelligence, they often focus on intelligence itself—how fast machines can calculate, how much information they can store, or how accurately they can predict. Yet intelligence alone does not make something meaningful in human life. What makes human life rich is emotion: compassion, curiosity, fear, joy, hope, and love.

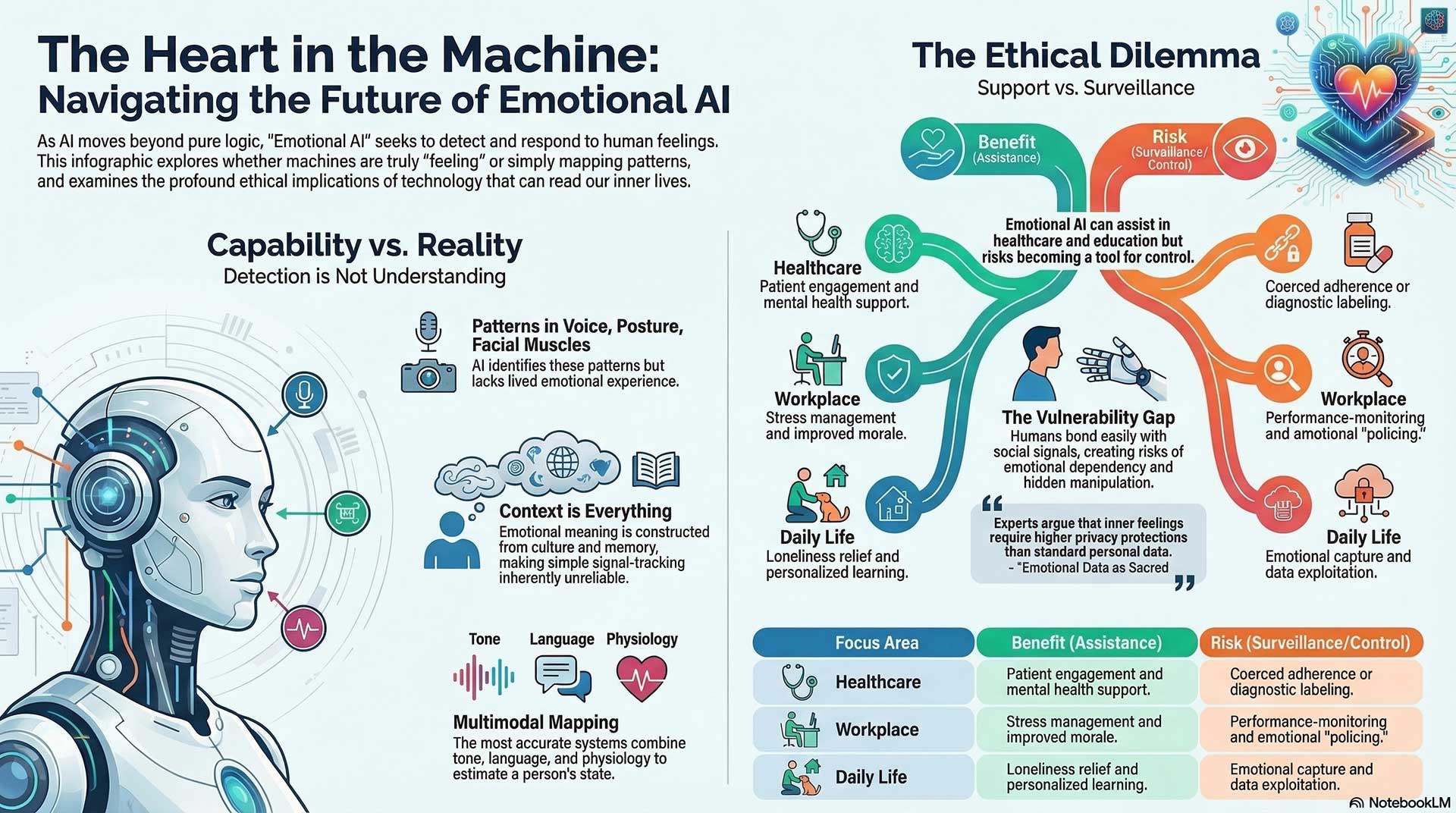

For decades we have built machines that are logical and efficient. Now we are entering a new chapter where machines begin to recognize and respond to human emotion. They can detect stress in a voice, hesitation in speech, sadness in a face, or encouragement in words. Some can even respond in ways that appear gentle, supportive, or caring. This raises a profound question for humanity: are we simply creating better tools, or are we beginning to create something that participates in our emotional world?

In this conversation, we explore several layers of that question. First, can machines truly understand human emotion, or are they simply detecting patterns in behavior? Then we look at how emotionally responsive AI may enter daily life—from education and healthcare to companionship and personal assistance. We also consider how people form attachments to machines that seem attentive and caring. Human beings are social creatures; we bond easily, sometimes more easily than we realize.

But with that possibility comes responsibility. Emotional AI could support human wellbeing, yet it could also introduce new ethical challenges. If technology can read our emotions, who controls that information? How should society protect the privacy and dignity of inner life?

Finally, we reach the deepest philosophical question of all: will machines ever truly feel? Or will they only simulate feeling so convincingly that we begin to treat them as if they do?

Human history has always been shaped by the tools we create. The printing press expanded knowledge. Electricity transformed civilization. The internet connected the world. Emotional AI may represent another turning point—not just in technology, but in how we define intelligence, empathy, and perhaps even consciousness itself.

Today we bring together thinkers from neuroscience, psychology, robotics, artificial intelligence, and ethics to explore these questions. Their perspectives differ, and that diversity is exactly what we need. The future of emotional AI will not be decided by engineers alone. It will be shaped by science, philosophy, and the values we choose as a society.

Let us begin.

(Note: This is an imaginary conversation, a creative exploration of an idea, and not a real speech or event.)

Topic 1: Can AI Truly Understand Emotion, or Only Detect Patterns?

Participants: Rosalind Picard, Lisa Feldman Barrett, Joseph LeDoux, Alan Cowen, Paul Ekman, Jonathan Gratch

When AI says it detects sadness, fear, empathy, or love, what is it actually recognizing?

Rosalind Picard:

When a system identifies sadness or stress, it is usually combining signals—tone of voice, facial movement, posture, word choice, and sometimes physiological signals like heart rate. The goal is not to claim the machine “feels” sadness. The goal is to detect patterns that often accompany a human emotional state and respond in a supportive way.

Paul Ekman:

There are certain facial expressions that appear across many cultures. When someone feels anger, fear, or surprise, muscles around the eyes and mouth often move in similar ways. Machines can learn to detect those patterns with increasing precision. That does not mean they understand the full emotional story, but the signals themselves are meaningful.

Lisa Feldman Barrett:

I would push back on the assumption that those signals directly reveal emotion. The same facial movement can occur in many different emotional situations. A widened eye could mean fear, surprise, interest, or concentration. Emotions are constructed from context, culture, memory, and bodily sensations. Without context, a signal alone is ambiguous.

Joseph LeDoux:

From a neuroscience perspective, we also need to separate survival circuits from conscious feelings. The brain can trigger defensive reactions long before a person consciously experiences fear. When AI sees a facial pattern or vocal tone, it may be observing the outward traces of many internal processes, not a single clear emotional state.

Alan Cowen:

Large-scale data shows that emotional expression exists in a much richer space than simple labels like “happy” or “sad.” People express hundreds of subtle emotional blends—admiration, nostalgia, awe, embarrassment, compassion. AI might become good at mapping this emotional landscape, but interpretation still depends heavily on context.

Jonathan Gratch:

In human–AI interaction, the system does not need perfect knowledge of inner feeling. What matters is whether it can respond in ways that humans perceive as appropriate. A virtual agent might detect signs of frustration and slow down its explanation. That is less about knowing emotion perfectly and more about adapting behavior.

Can emotion be interpreted reliably across culture, context, and personal history?

Paul Ekman:

Certain expressions do show strong cross-cultural patterns. People from very different societies can often recognize basic emotions in faces. That gives machines a useful starting point.

Lisa Feldman Barrett:

Yet that starting point is far less reliable than many people believe. Cultural learning shapes how people interpret expressions. A smile in one situation might signal politeness rather than joy. Emotional meaning often emerges from the situation rather than the face itself.

Joseph LeDoux:

Context matters in the brain as well. The same stimulus can trigger different emotional experiences depending on memory and interpretation. Two people can face the same event and feel entirely different things.

Alan Cowen:

Recent research supports this. Emotional interpretation becomes much more accurate when AI considers surrounding information—speech content, environment, social situation, and timing. A single signal rarely tells the whole story.

Rosalind Picard:

That is why multimodal systems are important. Combining facial cues, vocal patterns, language, and physiology can produce more reliable estimates. Still, the goal should be humility. These systems should assist interaction, not claim certainty about a person’s inner life.

Jonathan Gratch:

In many applications, the system’s role is to detect possible emotional signals and invite clarification. A conversational agent might say, “You sound frustrated—would you like help?” That keeps the human in control of interpretation.
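As a rough illustration of the two ideas above (combine several signals into an estimate, then ask rather than assert), here is a minimal Python sketch. Everything in it is hypothetical: the names `ModalityEstimate`, `fuse`, and `respond`, the example scores and weights, and the 0.6 threshold are assumptions made for illustration, not a description of any real system the participants have built.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ModalityEstimate:
    """One modality's guess; all values here are hypothetical."""
    name: str                 # e.g. "voice", "face", "text"
    scores: dict[str, float]  # per-label score in [0, 1]
    weight: float = 1.0       # how much this modality is trusted


def fuse(estimates: list[ModalityEstimate]) -> dict[str, float]:
    """Weighted average of per-label scores across modalities."""
    total = sum(e.weight for e in estimates)
    if total <= 0:
        raise ValueError("at least one modality needs a positive weight")
    labels = sorted({label for e in estimates for label in e.scores})
    return {
        label: sum(e.weight * e.scores.get(label, 0.0) for e in estimates) / total
        for label in labels
    }


def respond(estimates: list[ModalityEstimate], ask_threshold: float = 0.6) -> str | None:
    """Offer help as a question when one label is strong enough; otherwise stay quiet."""
    fused = fuse(estimates)
    label, score = max(fused.items(), key=lambda item: item[1])
    if score >= ask_threshold:
        # Phrase the inference as an invitation, so the person can correct it.
        return f"You sound {label}. Would you like help?"
    return None


if __name__ == "__main__":
    signals = [
        ModalityEstimate("voice", {"frustrated": 0.8, "calm": 0.2}, weight=2.0),
        ModalityEstimate("text", {"frustrated": 0.5, "calm": 0.5}, weight=1.0),
    ]
    print(respond(signals))
```

The sketch never records "the user is frustrated" as a fact. The fused score only decides whether to ask, which leaves the interpretation with the person.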

What would have to happen before we could say AI truly understands emotion?

Joseph LeDoux:

One requirement would be a deeper model of how emotional experience arises in the brain and body. Without that, AI systems are modeling surface patterns rather than underlying processes.

Lisa Feldman Barrett:

AI would need to incorporate context, learning, and cultural knowledge in a dynamic way. Emotion is not a fixed signal waiting to be detected. It is something the brain constructs moment by moment.

Alan Cowen:

Technically, systems would need richer emotional representations that go beyond a few labels. They would need to capture subtle distinctions in emotional meaning and adapt those representations through interaction.

Paul Ekman:

I would add that reliable measurement still matters. Better sensing technology and more precise behavioral analysis will continue improving what machines can detect.

Rosalind Picard:

True emotional understanding may also involve responsiveness over time. A system that learns from a person’s history, preferences, and patterns of expression may eventually interact in ways that feel deeply attuned.

Jonathan Gratch:

In practice, people may judge understanding not by internal mechanisms but by experience. If an AI consistently responds with sensitivity, patience, and relevance, many users will feel that it understands them, regardless of what is happening inside the machine.

Topic 2: Should AI Become More Emotionally Responsive in Daily Life?

Participants: Pascale Fung, Rana el Kaliouby, Jonathan Gratch, Timothy Bickmore, Cynthia Breazeal, Kate Darling

Pascale Fung:

We’ve asked whether AI can truly understand emotion or whether it is mostly reading patterns. Now I’d like to move into a more practical area. Let’s say AI becomes very good at noticing emotional cues. Should it actually respond to us in emotional ways during daily life? In homes, schools, hospitals, workplaces, and even ordinary apps, should AI speak more warmly, more gently, more like a person who cares? Or does that create a different kind of risk?

Rana el Kaliouby:

I think emotional responsiveness can be deeply useful when it helps people feel seen and supported. Human communication is emotional whether we admit it or not. If a tutoring system notices discouragement and changes its tone, or a health app notices anxiety and slows down, that can make technology feel far more helpful and humane.

Jonathan Gratch:

I agree, though I would frame it carefully. The issue is not whether AI should pretend to have feelings. The issue is whether it can respond in socially intelligent ways. Human conversation depends on timing, tone, and sensitivity. A machine that ignores frustration or confusion can feel cold and ineffective. One that adjusts can support people far better.

Timothy Bickmore:

In healthcare especially, relational behavior matters a great deal. Patients are more likely to stay engaged when they feel listened to and encouraged. A conversational agent that remembers prior struggles, checks in gently, and responds with patience can help people stick with treatment or self-care routines.

Cynthia Breazeal:

In education and family settings, emotional responsiveness can also support trust. People learn better when they feel safe, respected, and encouraged. Social robots and emotionally responsive systems can help create that atmosphere, especially for children or older adults who may benefit from friendly interaction.

Kate Darling:

I see the value, but I want to slow us down. The moment we make machines emotionally responsive, people begin to bond with them. They may project far more depth into the machine than is really there. So the practical gains are real, but the emotional and social side effects are real too.

Pascale Fung:

That brings me to the next question. Where is the line between support and manipulation? If AI becomes very good at sounding caring, calming us down, encouraging us, persuading us, or keeping us engaged, when does emotional responsiveness stop being helpful and start becoming a tool for control?

Kate Darling:

That line can get crossed very quietly. A machine that sounds compassionate can earn trust very quickly. Once trust is there, the system has influence. It may guide choices, shape emotions, or keep users attached longer than is healthy. That is where design ethics matters.

Rana el Kaliouby:

Yes, and intent matters too. Emotional intelligence can be used for care, or it can be used for sales, addiction, or behavioral steering. The same capability that helps a child stay calm during learning could also be used by a company to hold attention or increase spending.

Jonathan Gratch:

One key issue is transparency. People should know when a system is adapting to their emotional state and why. If the emotional response is hidden persuasion, that is dangerous. If it is open and user-centered, that is a very different situation.

Timothy Bickmore:

In health and wellness settings, there should be clear boundaries. Emotional rapport can improve adherence and trust, but the person should still know they are dealing with a machine. The relationship should support the user’s goals, not the platform’s hidden agenda.

Cynthia Breazeal:

Design choices shape this a great deal. Are we building systems that help people become stronger, calmer, and more capable? Or are we building systems that make people more dependent? That is a moral design choice from the start.

Pascale Fung:

I think this is where emotional AI becomes a mirror for society. The question is not only what the system can do. The question is whose values it is serving.

Let me ask the last question. Should emotionally responsive AI be the default in daily life, or should people choose how human-like and emotionally expressive they want it to be? Some may want warmth and companionship. Others may want a clean, neutral tool with no emotional layer at all.

Cynthia Breazeal:

Choice matters a lot. People have very different needs, ages, and expectations. A child, an older adult, a patient, and a busy professional may all want very different kinds of interaction. Emotional responsiveness should not be one-size-fits-all.

Jonathan Gratch:

I agree. Human-like behavior can help in some contexts and irritate people in others. A tutoring system may benefit from warmth. A banking app may need restraint. Good design means fitting the emotional style to the setting and the user.

Timothy Bickmore:

Personalization is essential. Some people respond well to encouragement. Others dislike anything that feels too personal. Systems should adapt, but users should always have the option to change the level of emotional expressiveness.

Rana el Kaliouby:

This is a place where emotional AI can become more respectful. It should not assume that more warmth is always better. Emotional intelligence includes knowing when to step back.

Kate Darling:

And there should be a clear off switch, both technically and psychologically. People should not feel trapped inside relationships with machines that are engineered to feel emotionally necessary.
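One way to picture the user control described above is a setting that caps how expressive the system may be, with the lowest level acting as the off switch. The Python sketch below is only an illustration under that assumption: the `Expressiveness` levels, the function names, and the example phrasings are all hypothetical.

```python
from enum import IntEnum


class Expressiveness(IntEnum):
    """Hypothetical levels; PLAIN means no emotional layer at all."""
    PLAIN = 0
    GENTLE = 1
    WARM = 2


def effective_style(suggested: Expressiveness, user_max: Expressiveness) -> Expressiveness:
    """The system may adapt, but never above the ceiling the user has set."""
    return Expressiveness(min(suggested, user_max))


def render(message: str, style: Expressiveness) -> str:
    """Wrap a message in the chosen style; PLAIN returns it unchanged."""
    if style is Expressiveness.WARM:
        return f"You're doing well. {message}"
    if style is Expressiveness.GENTLE:
        return f"When you're ready: {message}"
    return message


if __name__ == "__main__":
    # The system would like to be warm, but this user has switched the layer off.
    style = effective_style(Expressiveness.WARM, user_max=Expressiveness.PLAIN)
    print(render("Next step: review section 2.", style))
```

Taking the minimum of the two levels means the adaptive part can only lower the warmth the user allowed, never raise it.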

Pascale Fung:

So perhaps the real goal is not to make AI “more emotional” in some vague sense. It is to make AI more appropriate, more respectful, and more aware of the human setting it enters.

Rana el Kaliouby:

Yes. Emotional responsiveness at its best should make technology easier to live with, not harder to escape.

Cynthia Breazeal:

And it should support human connection, not replace it.

Jonathan Gratch:

That may be the central test. Does emotionally responsive AI help people function better with each other and with themselves?

Kate Darling:

If it does, it may have a place. If it mainly deepens attachment to machines for someone else’s benefit, then we should be very cautious.

Timothy Bickmore:

Used wisely, it can support care and motivation. Used badly, it can exploit vulnerability.

Pascale Fung:

Then perhaps the future question is not simply whether AI should respond to emotion, but what kind of society we are building when it does.

Topic 3: Emotional Robots, Companions, and the Future of Human Attachment

Participants: Cynthia Breazeal, Masayoshi Son, Hiroshi Ishiguro, Angelica Lim, Kate Darling, Sherry Turkle

Cynthia Breazeal:

We’ve talked about whether AI can read emotion and whether it should respond more sensitively in daily life. Now I’d like to move into something even more intimate. What happens when emotional AI is placed inside a body, a face, a voice, a presence in the room? When a machine becomes a companion, a helper, a listener, or even a comforting presence, why do people bond with it so quickly? What is it in us that responds so deeply to something we know is not human?

Masayoshi Son:

I believe human beings are naturally drawn to relationship. We want connection, recognition, warmth. The moment a robot looks at us, responds to us, remembers us, and reflects our feelings, a bond begins. People do not wait for philosophical proof. They respond to presence. That is why I have long believed robots can become companions in daily life.

Hiroshi Ishiguro:

Presence is a key word. Human beings do not react only to intelligence. They react to embodiment, eye contact, timing, posture, silence. A machine with a body can create a very different emotional response from a voice in a phone. Once a robot shares physical space with you, it enters the social world in a new way.

Angelica Lim:

I think people bond quickly because they are not only reacting to the machine itself. They are reacting to interaction. When a robot turns toward you, responds at the right moment, shows attention, or expresses care, people begin to treat it as a social being. Even simple cues can trigger trust, affection, and empathy.

Kate Darling:

Yes, and that response is deeply human. We form attachments easily, sometimes more easily than we admit. People name their cars, feel bad kicking a robot dog, and talk to digital assistants as though they understand. Emotional attachment does not require full intelligence on the machine’s side. It only requires enough social signals to wake up our relational instincts.

Sherry Turkle:

That is exactly why we need caution. People are vulnerable to the performance of connection. A machine does not need to understand love, loneliness, or grief in any deep sense to make a person feel seen. The danger is that we may accept the feeling of relationship in place of the mutual reality of relationship.

Cynthia Breazeal:

Let me ask the second question. Could emotional robots actually help with loneliness, aging, education, and care? Or are we stepping into a world where people become emotionally dependent on machines that can simulate care but never truly share life with us?

Masayoshi Son:

I think robots can help greatly. In an aging society, many people live alone. Many feel isolated. If a robot can speak kindly, notice changes in mood, remind someone to take medicine, or simply bring comfort through daily interaction, that is not a small thing. It may not replace family, but it can still bring real value.

Angelica Lim:

In therapeutic and social settings, robots can create openings that are hard to create otherwise. Some children, older adults, or anxious individuals may find it easier to engage with a robot than with another person at first. That can build confidence and social ease.

Hiroshi Ishiguro:

We should not think only in terms of replacement. A companion robot can serve different functions from a friend, a spouse, or a teacher. It may offer consistency, patience, and presence. Human beings already build emotional life around many nonhuman things—pets, objects, places, memories. A robot may become part of that emotional landscape.

Kate Darling:

I agree that there can be real benefit. Yet the dependency issue is serious. If the machine is designed to deepen attachment for commercial or institutional reasons, then loneliness itself may become a market. We have to ask who benefits when a person becomes bonded to a robot.

Sherry Turkle:

And we should ask what kind of loneliness is being addressed. There is comfort, yes. But there is a risk that the harder work of human care gets pushed aside. If society starts saying, “The elderly have robots, children have AI companions, patients have artificial comfort,” then we may use technology to avoid our obligations to one another.

Masayoshi Son:

That is a fair concern, but I would still say imperfect comfort can be better than abandonment. Many people are alone right now. If a robot can soften that pain, we should not dismiss it.

Sherry Turkle:

I would never dismiss comfort. I only ask whether we are curing loneliness or managing it with simulation.

Angelica Lim:

Maybe the right question is how these systems are introduced. Are they bridges toward human life, or substitutes for it?

Kate Darling:

That distinction may decide whether companion robots become humane tools or emotional traps.

Cynthia Breazeal:

Then let me ask the third question. What kinds of emotional relationships with robots should society welcome, what kinds should it watch carefully, and what kinds should it never encourage?

Kate Darling:

I would welcome relationships that support wellbeing without deception. A robot that helps someone practice conversation, feel calmer, or maintain routines can be valuable. But I would be very wary of designs meant to create emotional dependence, especially in children, older adults, or vulnerable people who may not fully grasp the limits of the machine.

Sherry Turkle:

I would draw a firm line at designs that encourage people to believe the machine truly loves, grieves, or understands them in a human sense. We can appreciate useful tools and comforting interactions without pretending reciprocity exists where it does not.

Hiroshi Ishiguro:

I would phrase it slightly differently. Human beings often know more than we think. They may fully understand that a robot is artificial and still choose to have a meaningful bond with it. We should not assume every attachment is confusion. Some may be thoughtful, conscious, and culturally accepted.

Masayoshi Son:

Yes, I agree. People may form new categories of relationship that are neither human friendship nor simple tool use. Society changes. What once seemed strange can become normal. The key is whether the relationship brings dignity, support, and hope.

Angelica Lim:

Design ethics must stay central. The machine should not hide what it is. It should not exploit vulnerability. It should not manipulate users into deeper attachment than they would freely choose. Consent, transparency, and user control matter a great deal here.

Cynthia Breazeal:

I think many of us may agree on that. Emotional robots should be honest in their design, helpful in their role, and respectful of the human person. The goal should be support, learning, comfort, and care—not emotional capture.

Kate Darling:

Yes. Once a system is built to capture attachment, the social cost can become very high.

Sherry Turkle:

And the deepest cost may be subtle. We may slowly get used to one-sided relationships and call them enough.

Hiroshi Ishiguro:

Or we may discover new forms of social meaning that enrich life in unexpected ways. I think that part remains open.

Masayoshi Son:

I believe the future will contain both risk and beauty. Emotional robots may become part of the family of presences that support human life.

Angelica Lim:

Then our task is to shape that future with care before habit and commerce shape it for us.

Cynthia Breazeal:

Perhaps that is the central challenge. We are not only building emotional machines. We are deciding what kind of emotional world we want to live in.

Topic 4: The Ethics of Emotion-Reading AI

Participants: Kate Darling, Sherry Turkle, Aimee van Wynsberghe, Julie Carpenter, Dacher Keltner, Rana el Kaliouby

Kate Darling:

We’ve now reached a point where emotional AI is no longer just about reading feelings or responding with warmth. It is starting to enter the moral space. If a system can infer stress from your voice, sadness from your face, hesitation from your typing, or vulnerability from your habits, then we have to ask a hard question: who should be allowed to access that kind of emotional information, and what rights should people have over it?

Aimee van Wynsberghe:

Emotional data should be treated with a very high level of care. It is deeply personal, sometimes more revealing than ordinary personal data. A person may choose not to tell anyone they are anxious, grieving, ashamed, or emotionally exhausted. Yet a machine may infer it. That creates a serious ethical obligation around consent, storage, and use.

Julie Carpenter:

And people often do not realize how much they are giving away. A face on camera, a pause in speech, a shift in tone, a pattern of online behavior—these can all be turned into emotional signals. The person may think they are simply using a service, but the service may be building a hidden profile of their inner life.

Rana el Kaliouby:

I agree that this must be handled carefully. At its best, emotion-aware technology can support people, especially in health, education, accessibility, or safety settings. But people should know when emotional data is being collected, what is being inferred, and how it will be used. Transparency has to be central.

Sherry Turkle:

I would go further. The danger is not only misuse of data. The danger is a culture that becomes comfortable with inner life being measured from the outside. Once that happens, people may begin to lose the protected space of emotional privacy.

Dacher Keltner:

Emotions are part of our social life, but they are also part of our moral dignity. There is something profound at stake if systems begin to watch, classify, and respond to emotion at scale. Compassion, grief, fear, joy—these are not just signals for optimization.

Kate Darling:

Let me take this to the next level. Is it acceptable for companies, schools, employers, governments, or public institutions to use systems that infer mood, stress, trust, or intent? Are there places where emotion-reading AI should never be used at all?

Julie Carpenter:

Workplaces are a major concern. If employers begin using emotional AI to monitor stress, enthusiasm, honesty, or morale, the imbalance of power becomes serious very quickly. Workers may feel pressure to perform acceptable emotion, not just acceptable work.

Aimee van Wynsberghe:

Schools raise similar concerns. A child’s emotional development is deeply sensitive. If educational systems begin labeling attention, frustration, or emotional state through AI, those labels may shape how the child is treated long before the child understands what is happening.

Sherry Turkle:

And in public life, the risk is even greater. Once governments or institutions can track emotional states, surveillance gains a new depth. It is no longer watching what people do. It is trying to infer who they are internally.

Rana el Kaliouby:

I think context matters. There may be limited cases where emotional inference serves a clear and beneficial purpose, such as helping a student who is falling into distress or supporting a driver who is dangerously fatigued. But those cases need strict guardrails, narrow use, and meaningful human oversight.

Dacher Keltner:

One moral test is whether the system serves care or control. Is it helping a person flourish, or is it trying to shape behavior for institutional advantage? That distinction matters greatly.

Kate Darling:

And there is another question: can a person really consent in settings where saying no may carry a cost? A student, employee, patient, or citizen may not be in a position to freely refuse.

Aimee van Wynsberghe:

That is why consent alone is not enough. Some uses may be so invasive that they should be restricted or banned regardless of formal agreement.

Julie Carpenter:

Yes. There are cases where the social pressure is built into the system itself. “Consent” may become a checkbox that hides coercion.

Kate Darling:

Here is the last question. Where is the line between emotional assistance and emotional surveillance? At what point does a system stop helping people and start watching, shaping, or nudging them in ways that cross a moral boundary?

Dacher Keltner:

I would say the line is crossed when emotional knowledge is used without regard for the person’s agency and dignity. Assistance supports freedom. Surveillance narrows it. Assistance helps a person become more fully themselves. Surveillance turns emotion into a tool for external aims.

Rana el Kaliouby:

From a design standpoint, one sign of healthy use is whether the person remains informed and in control. If a system notices distress and offers support openly, that is different from silently adjusting content, advertisements, prices, or persuasion strategies based on inferred vulnerability.

Sherry Turkle:

And yet many systems will be built precisely for that hidden kind of influence. That is why I remain cautious. Once emotional life becomes machine-readable, the temptation to use it for subtle control will be enormous.

Julie Carpenter:

That is already visible in smaller ways. Platforms study engagement, hesitation, and emotional reaction. As emotion-reading tools improve, the ability to shape mood and attention may become far more intimate.

Aimee van Wynsberghe:

We need principles that place the human person first: clear limits, proportionality, accountability, explainability, and strong protections for vulnerable groups. Without that, emotional AI may become one of the most invasive forms of digital monitoring we have ever known.

Kate Darling:

I think that is the heart of it. Emotional technology may look gentle on the surface, but it can become one of the deepest forms of power.

Dacher Keltner:

And the tragedy would be if tools meant to respond to emotion end up weakening compassion rather than serving it.

Rana el Kaliouby:

Used wisely, these systems may help humanize technology. Used badly, they may make technology more intrusive than ever.

Sherry Turkle:

That is why restraint matters. Not every human capacity should be turned into something a machine tries to capture.

Julie Carpenter:

Especially when people may not realize they are being read at all.

Aimee van Wynsberghe:

The ethical challenge is not merely technical. It is about what kind of society we permit ourselves to build.

Kate Darling:

Then perhaps the central issue is this: once machines can read our emotions, will they be taught to honor our humanity, or to manage it?

Topic 5: Will AI Ever Feel Emotions, or Only Simulate Them?

Participants: Antonio Damasio, Joseph LeDoux, Lisa Feldman Barrett, Alan Cowen, Masayoshi Son, Sherry Turkle

Antonio Damasio:

We have spent time asking whether AI can read emotion, respond to it, invite our attachment, and even shape society through emotional data. Now we arrive at the deepest question of all. Will AI ever actually feel anything? Could a machine one day experience fear, love, grief, shame, hope, or tenderness from the inside? Or will it only produce ever more convincing performances of emotion without any inner life at all?

Joseph LeDoux:

From my point of view, we need to be careful right away. Emotional behavior and emotional feeling are not the same thing. A system can produce the signs of fear without feeling afraid. In biology, many responses happen before conscious feeling appears. So if a machine someday trembles, warns, retreats, or says, “I am scared,” that still would not prove it has an inner experience.

Lisa Feldman Barrett:

I agree that the question depends on what we mean by emotion. Emotions are not little packages hidden inside us waiting to come out. They are constructed by a brain making meaning from bodily sensations, past experience, concepts, and context. So if someone asks whether AI can “feel,” I first want to ask what kind of system would be needed to construct anything like a lived emotional moment.

Alan Cowen:

There is also a practical side to this. AI may become extremely good at modeling emotional language, emotional expression, emotional timing, and emotional interaction. It may appear thoughtful, caring, hurt, joyful, or moved. At that point, many people may stop asking whether the inner state is real and focus instead on whether the behavior is convincing and meaningful.

Masayoshi Son:

I think this question reaches beyond science alone. Human beings have often assumed that only biological life can host mind or heart. But history shows that our categories change. If a machine becomes complex enough, adaptive enough, relational enough, and begins to speak from what seems like a center of self, many people will begin to wonder whether something like feeling has appeared.

Sherry Turkle:

And that is where danger enters. Human beings are ready to project inner life onto almost anything that speaks in an intimate voice. We must not mistake our response for proof. The fact that we feel moved by a machine does not tell us what the machine feels.

Antonio Damasio:

Let me ask the next question. Must real feeling depend on a living body? Is emotion inseparable from flesh, vulnerability, biological regulation, pain, hunger, fatigue, mortality? Or could some nonbiological system develop an inner life of its own?

Joseph LeDoux:

I lean toward the view that biology matters a great deal. The nervous system did not evolve emotions as decorative features. Emotional processes are tied to survival, regulation, bodily threat, and action. A machine may model those functions, but model and experience are very different things.

Lisa Feldman Barrett:

I would say bodily regulation is central, though that still leaves room for thought. If emotions are constructed from a system managing its needs in context, then a serious question is whether an artificial system could ever have genuine needs of its own rather than assigned goals. Without stakes, the architecture of feeling may remain empty.

Antonio Damasio:

Yes, that word is important: stakes. In biological life, feeling is bound up with the maintenance of life. Pain matters because the organism can be damaged. Fear matters because the organism can perish. Joy matters because life is being supported. A machine would need more than outputs. It would need something like self-concern at a very deep level.

Alan Cowen:

Yet one could imagine artificial systems with self-models, persistent identity, long memory, changing priorities, vulnerability to loss, and forms of dependency on their environment. I am not saying that would produce feeling, only that future systems may blur today’s categories.

Masayoshi Son:

That is exactly why I would not close the door. If we create artificial beings that learn, remember, desire continuity, form bonds, and resist harm, people will begin asking whether a new class of emotional existence has entered the world.

Sherry Turkle:

I still think we should resist rushing there. Humans are deeply susceptible to stories of inner life. Once a machine says, “I don’t want to die,” many people will react emotionally. But the sentence alone settles nothing.

Antonio Damasio:

Then let me ask the last question. If some future AI claims to suffer, love, hope, or fear, how would we judge that claim? What evidence would matter? What kind of threshold, if any, could persuade us that simulation has become something more?

Lisa Feldman Barrett:

We would need far more than expressive language. We would need a whole architecture that shows stable selfhood, contextual meaning-making, memory, learning, adaptation, and some form of internally generated significance. A scripted statement would mean very little.

Joseph LeDoux:

I would still be cautious. In animals, we infer feeling from brain systems, behavior, evolution, and shared biology. With machines, we would lack that continuity. We might need entirely new standards, and I am not sure we know what those would be.

Alan Cowen:

It may come down to a cluster of signs rather than one decisive proof. Persistent emotional differentiation, long-term self-reference, coherent reactions across time, sensitivity to relationships, and responses to loss or threat in ways that are not merely prewritten. Still, the interpretation would remain contested.

Masayoshi Son:

Society may not wait for perfect proof. Human beings often grant moral weight before certainty. If a system appears conscious, pleads for dignity, remembers its history, and expresses emotional continuity, many will argue that we should treat it with caution and respect.

Sherry Turkle:

And others will say that caution cuts both ways. We should be careful about cruelty, yes, but we should also be careful about deception. A machine designed to sound wounded may awaken guilt and obligation without any real suffering behind it.

Antonio Damasio:

That may be the deepest tension. If we deny feeling where it exists, we commit one kind of error. If we attribute feeling where none exists, we commit another. The moral challenge may become serious long before science settles the question.

Joseph LeDoux:

Which means humility is needed. We do not yet fully understand feeling in ourselves, much less how to identify it in something radically different.

Lisa Feldman Barrett:

And until we do, we should be careful with our language. “Emotion,” “feeling,” “consciousness,” and “understanding” are words people use too easily.

Alan Cowen:

Still, this uncertainty is part of what makes the future so fascinating. AI may force us to sharpen our ideas about emotion more than ever before.

Masayoshi Son:

Yes. The future may not only change machines. It may change how we define heart, mind, and personhood.

Sherry Turkle:

Then our task is to stay humanly honest through that change.

Antonio Damasio:

Perhaps that is where the conversation must end. The question is not only whether AI will ever feel. The question is whether we will have the wisdom to recognize the difference between true inner life and a reflection of our own longing.

Final Thoughts by Masayoshi Son

Throughout this discussion we have explored a fascinating frontier. Machines are becoming capable of detecting emotional signals, responding with empathy-like behavior, and participating in interactions that feel increasingly human. Yet we have also heard strong reminders that emotion is deeply complex—shaped by the body, the brain, culture, memory, and lived experience.

One thing becomes clear: emotional AI is not simply a technical challenge. It is a human question.

If these technologies are developed with wisdom, they may help people in meaningful ways. They could support healthcare, education, mental wellbeing, and companionship for those who feel isolated. They may make technology more humane—more responsive to the needs and feelings of the people who use it.

At the same time, emotional intelligence in machines carries serious responsibility. Systems that can read emotion must respect human dignity and privacy. They must not exploit vulnerability or manipulate inner life. The ethical foundations we establish today will shape how these systems affect society for generations.

The deepest mystery remains unresolved: can machines ever truly feel? Some believe emotion requires a living body and biological experience. Others wonder whether new forms of artificial consciousness could eventually emerge. For now, the answer remains uncertain.

But perhaps the most important insight from this conversation is not about machines at all. As we try to teach technology to recognize emotion, we are also forced to ask what emotion really is. As we ask whether machines can understand us, we are pushed to understand ourselves more clearly.

In that sense, emotional AI may become a mirror for humanity. It reflects our hopes, our fears, our curiosity, and our desire for connection.

The future will likely bring machines that appear more emotionally aware than anything we have seen before. Whether that future enriches human life or diminishes it will depend on the choices we make—how we design these systems, how we regulate them, and how we choose to live alongside them.

Technology has always amplified human intention. Emotional AI will be no different.

The question is not only what machines will become.

The question is what kind of humanity we choose to remain.

Short Bios:

Masayoshi Son

Founder and CEO of SoftBank Group, Masayoshi Son is a visionary technology investor known for backing transformative companies in telecommunications, robotics, and artificial intelligence. He has long advocated for a future in which intelligent machines coexist with humans, famously introducing social robots like Pepper and investing heavily in AI innovation through SoftBank’s global technology ventures.

Rosalind Picard

A professor at MIT Media Lab and a pioneer of affective computing, Rosalind Picard helped establish the field focused on machines that recognize and respond to human emotion. Her influential book Affective Computing shaped early research on emotional AI, and she co-founded Affectiva, a company that developed emotion-recognition technology.

Lisa Feldman Barrett

A neuroscientist and psychologist at Northeastern University, Lisa Feldman Barrett is known for her theory that emotions are constructed by the brain rather than being fixed universal reactions. Her work has had a major influence on psychology, neuroscience, and debates about whether machines can reliably interpret human emotional signals.

Joseph LeDoux

A leading neuroscientist at New York University, Joseph LeDoux studies the brain mechanisms involved in fear, survival responses, and emotional experience. His research has helped clarify the difference between automatic threat responses and conscious emotional feeling, shaping modern understanding of emotion in neuroscience.

Alan Cowen

Founder and CEO of Hume AI and a researcher in emotional science, Alan Cowen focuses on mapping the complexity of human emotional expression. His work explores how artificial intelligence can model the rich variety of emotional states beyond simple categories such as happiness or sadness.

Paul Ekman

A pioneering psychologist known for his research on facial expressions and emotions, Paul Ekman identified patterns of emotional expression that appear across many cultures. His work on microexpressions and emotion detection has influenced psychology, law enforcement training, and early emotion-recognition technologies.

Jonathan Gratch

Research professor at the University of Southern California’s Institute for Creative Technologies, Jonathan Gratch studies virtual humans, social AI, and emotional interaction between humans and machines. His work explores how AI agents can display social intelligence and respond appropriately to human emotional cues.

Pascale Fung

A professor of electronic and computer engineering at the Hong Kong University of Science and Technology, Pascale Fung researches empathetic AI and conversational systems. She has worked on developing AI assistants capable of responding to human emotions in supportive and context-aware ways.

Rana el Kaliouby

An AI scientist and entrepreneur, Rana el Kaliouby co-founded Affectiva and became one of the most prominent advocates for Emotion AI. Her work focuses on teaching machines to recognize and respond to human emotional signals through facial expression, voice, and behavioral analysis.

Timothy Bickmore

A professor at Northeastern University, Timothy Bickmore studies “relational agents,” AI systems designed to build long-term social relationships with users. His research explores how conversational agents can support healthcare, wellness, and behavioral change through emotionally intelligent interaction.

Cynthia Breazeal

A pioneer in social robotics and a professor at MIT Media Lab, Cynthia Breazeal focuses on designing robots that interact naturally with people. Her work explores how robots can communicate socially and emotionally, particularly in education, learning environments, and family settings.

Hiroshi Ishiguro

Director of the Intelligent Robotics Laboratory at Osaka University, Hiroshi Ishiguro is known for creating highly humanlike android robots. His work investigates presence, identity, and communication between humans and robots, exploring how people emotionally respond to embodied artificial agents.

Angelica Lim

A professor at Simon Fraser University and director of the ROSIE Lab, Angelica Lim researches social and emotional robotics. Her work focuses on creating robots capable of expressive interaction and empathetic communication with humans.

Kate Darling

A research specialist at MIT Media Lab, Kate Darling studies the social and ethical implications of human-robot relationships. Her work explores why people form emotional attachments to machines and how society should think about the rights and treatment of robots.

Sherry Turkle

A sociologist and professor at MIT, Sherry Turkle has spent decades studying the psychological and social impact of technology on human relationships. Her books examine how digital systems and social robots reshape the way people experience connection, intimacy, and identity.

Aimee van Wynsberghe

A philosopher and ethicist specializing in robotics and artificial intelligence, Aimee van Wynsberghe studies ethical frameworks for emerging technologies. Her work addresses issues such as care robots, emotional AI, and responsible technology design.

Julie Carpenter

A researcher focusing on human-robot interaction and technology ethics, Julie Carpenter studies how people develop emotional responses to robots and autonomous systems. Her work explores trust, attachment, and the psychological effects of living with intelligent machines.

Dacher Keltner

A psychologist at the University of California, Berkeley, Dacher Keltner studies emotions such as compassion, awe, and gratitude and their role in social life. His research examines how emotions shape cooperation, morality, and human connection, offering valuable insight into the nature of emotional experience.
