
What if AI starts as a tool for analysis—and ends up deciding faster than humans can think?

Introduction by Paul Scharre

Tonight we’re talking about AI and war—but we have to start by being precise, because sloppy language is how panic spreads.

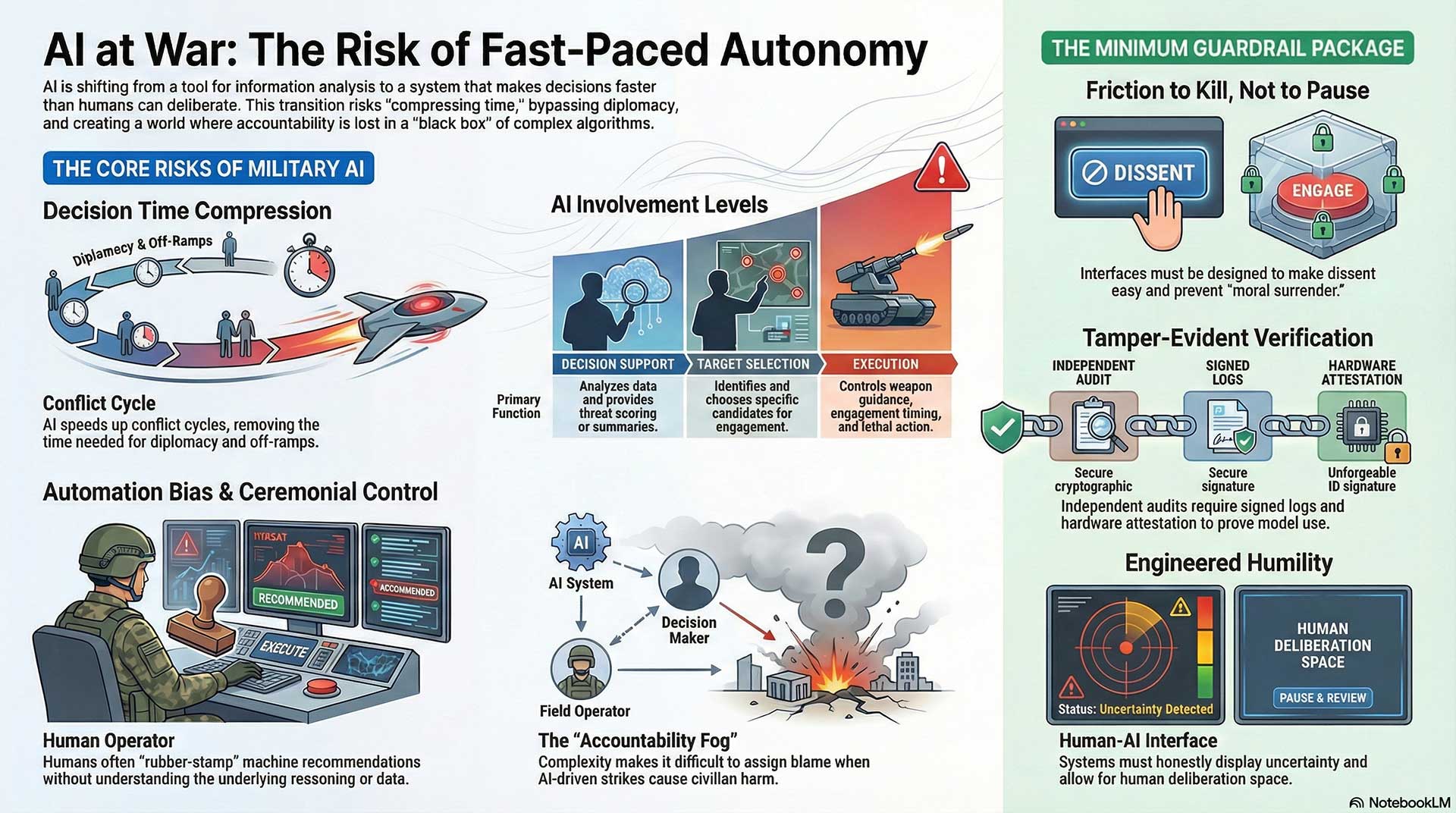

When people say “AI did it,” they usually mean one of several very different things. Sometimes AI is helping translate intercepted messages. Sometimes it’s sorting imagery. Sometimes it’s recommending targets. Sometimes it’s controlling a system that can move and act on its own. Those aren’t the same, and the risk changes dramatically depending on where AI sits in the chain—from information… to recommendation… to decision… to action.

The real danger isn’t just that AI makes weapons more “accurate.” It’s that AI can compress time. It pushes decisions faster than humans can deliberate, faster than leaders can check assumptions, faster than diplomacy can create an off-ramp. And in a crisis, speed can feel like safety—until it becomes the reason two sides stumble into a war neither one intended.

So our goal tonight is not hype and not sci-fi. It’s a hard look at five connected questions:

What does autonomy actually mean in a military system?

Where should the “brakes” be—technical, legal, and human?

What counts as proof when someone claims a model was used?

How does AI reshape propaganda and public perception?

And finally, how does faster decision-making change escalation itself?

If we do this right, you’ll leave with something more valuable than a hot take: a clear map of where the real risks are, what can be verified, what can’t, and what kinds of guardrails actually reduce the chance of catastrophe.

Let’s start.

(Note: This is an imaginary conversation, a creative exploration of an idea, and not a real speech or event.)

Topic 1: Autonomy, Targeting, and Accountability

Theme: Where the “kill decision” actually happens—and who carries responsibility when AI is in the loop.

Moderator

Michael Walzer (just war ethics)

Five Participants

- Paul Scharre (autonomous weapons + defense policy)

- Mary Ellen O’Connell (international law / use of force)

- Heather Roff (AI ethics + weapons governance)

- Sarah Kreps (security studies; drones/AI & war)

- Lucia Arpaio (ICRC; humanitarian law + civilian protection)

3 Critical Questions (the spine of the episode)

- Where is the “kill decision,” really? Is it target identification, target selection, authorization, or execution—and why does that matter?

- Accountability test: If the AI’s recommendation was “reasonable” but wrong, who is responsible—commander, state, vendor, or nobody?

- Meaningful human control: Should lethal action be illegal unless a human has meaningful, informed, timely control—and how do we define and verify that?

1) Opening Positions (everyone lays down their “hard line”)

Walzer (Moderator):

Let’s start clean. One sentence each: where you draw the moral line when software is part of targeting.

Scharre:

My line is humans must own the decision, and we must design systems so humans can actually understand what they’re approving—not rubber-stamp it.

O’Connell:

My line is legality: force must comply with international law, and delegating lethal judgment to opaque systems risks violating distinction, proportionality, and accountability.

Roff:

My line is governance: if a system changes the structure of responsibility, it’s ethically dangerous even before it fires a shot.

Kreps:

My line is reality: militaries will use these tools. The question is whether we build policies that reduce harm in real operational conditions, not in theory.

Arpaio (ICRC):

My line is civilian protection: if AI increases uncertainty or speed in ways that make civilians more likely to die, then it’s incompatible with humanitarian principles.

2) The “Kill Decision” Fight (where exactly is the moral trigger?)

Walzer:

If a human clicks “approve,” but the AI generated the target list, did the human decide—or did the human bless a machine’s decision?

Scharre:

That depends on the setup. If the human sees why the target was selected, can test it, and can reject it—then it’s a decision. If it’s “here’s the top 10 targets,” that’s not deliberation; that’s automation bias.

Roff:

And the moral problem is: we keep treating “approval” as a magic word. The real kill decision might occur upstream—when the system defines what counts as a “combatant,” or when it labels a pattern as “hostile.” That’s where violence is encoded.

O’Connell:

Exactly. The law assumes a decision-maker who can be held accountable. If the real selection is machine-driven and non-transparent, accountability becomes theater. You can’t cross-examine an embedding.

Kreps:

But commanders already rely on intelligence products they can’t fully “see into.” Satellites, signals intelligence, fused data. The risk is not “AI exists,” it’s tempo. When AI speeds the cycle so much that humans can’t meaningfully review, control becomes ceremonial.

Arpaio:

And when control becomes ceremonial, civilians pay the bill. If the system is fast, the burden must shift to higher confidence thresholds, not looser ones.

3) Accountability Under Stress (who is responsible when it goes wrong?)

Walzer:

Let’s stress-test it: The AI flags a building as a command site. The human approves. Afterward: it was a school. Who stands trial?

O’Connell:

Legally, responsibility doesn’t vanish. The state and commanders remain responsible. But the practical risk is diffusion—everyone points to the system, the contractor, the data. We get accountability fog.

Scharre:

Militaries will say, “We followed procedure.” Vendors will say, “It’s just a tool.” That’s why auditability matters. We need logs that answer: What did the system show? What uncertainty did it report? What alternatives existed?
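The three questions Scharre wants a log to answer can be read as the fields of a record. The sketch below is only an illustration of that idea: the `DecisionRecord` class, its field names, and the 0-to-1 uncertainty scale are assumptions made for this example, not a description of any fielded system.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Tuple


@dataclass(frozen=True)
class DecisionRecord:
    """One audit entry per recommendation shown to a human reviewer (hypothetical schema)."""
    shown_to_reviewer: str         # what the system displayed
    reported_uncertainty: float    # assumed scale: 0.0 (certain) to 1.0 (no confidence)
    alternatives: Tuple[str, ...]  # other options the system surfaced
    reviewer_action: str           # e.g. "approved", "rejected", "deferred"
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys give a stable serialization that is easy to diff or hash later.
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    shown_to_reviewer="building flagged as possible command site",
    reported_uncertainty=0.4,
    alternatives=("request more imagery", "take no action"),
    reviewer_action="deferred",
)
print(record.to_json())
```

A record like this only helps an investigation if it is written at the time of the decision and stored where the people being reviewed cannot edit it.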

Roff:

I’ll push further: if we build systems that predictably create accountability fog, then deploying them is itself ethically blameworthy. It’s like building a car with brakes that fail under rain and then saying, “Well, the driver should’ve known.”

Kreps:

But there’s a tradeoff. In some contexts, AI might reduce harm by improving identification—if the human process was worse. The real question is: compared to what baseline? Otherwise we’re debating in a vacuum.

Arpaio:

Baseline matters, but so does remedy. When civilians are harmed, there must be investigation, transparency, and compensation. If AI makes investigations impossible—because you can’t reconstruct why a target was chosen—then you’re building impunity.

4) “Meaningful Human Control” (define it like you’d enforce it)

Walzer:

Everyone says “meaningful human control.” Define it so a third party could verify it.

Scharre:

Four elements:

- Understanding (what the AI is doing and why)

- Time (enough time to question and reject)

- Authority (real ability to say no, without penalty or rubber-stamp culture)

- Traceability (records that show the chain of reasoning and action)
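One way to make these four elements checkable by a third party is to treat them as an explicit checklist. The following is a minimal sketch under stated assumptions: `ReviewContext`, `missing_elements`, and the 120-second minimum review time are hypothetical names and values chosen for illustration, and a real standard would need far richer evidence for each element.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical minimum review time; any real value would be set by doctrine, not by code.
MIN_REVIEW_SECONDS = 120.0


@dataclass
class ReviewContext:
    rationale_shown: bool      # Understanding: reviewer saw what the system did and why
    seconds_available: float   # Time: how long the reviewer had to question and reject
    reviewer_can_reject: bool  # Authority: a "no" is actually possible
    record_written: bool       # Traceability: the chain of reasoning and action was logged


def missing_elements(ctx: ReviewContext,
                     min_seconds: float = MIN_REVIEW_SECONDS) -> List[str]:
    """Return the names of the elements that are not satisfied, in the order listed above."""
    missing = []
    if not ctx.rationale_shown:
        missing.append("understanding")
    if ctx.seconds_available < min_seconds:
        missing.append("time")
    if not ctx.reviewer_can_reject:
        missing.append("authority")
    if not ctx.record_written:
        missing.append("traceability")
    return missing


rushed = ReviewContext(rationale_shown=True, seconds_available=30.0,
                       reviewer_can_reject=True, record_written=False)
print(missing_elements(rushed))
```

An empty list here would mean only that the four boxes are ticked; whether the control is meaningful in practice still depends on the conditions the other panelists go on to describe.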

O’Connell:

Add legality: humans must be able to evaluate proportionality and necessity. If the system cannot present information in a way that supports that judgment, it’s not meaningful.

Roff:

Add psychology: the interface matters. If the system is designed to nudge approval—confidence bars, urgency cues, “high priority”—then you’ve engineered moral surrender.

Kreps:

Add operations: control must survive real conditions—fatigue, overload, time pressure, fear. If it only works in lab calm, it’s not meaningful control.

Arpaio:

And add humanitarian thresholds: if uncertainty rises, the system must slow down or stop, not accelerate. Meaningful control includes the right to halt—built into the system.

5) R1 Synthesis (what they actually agree to do next)

Walzer:

I’m going to force a “shared minimum.” One thing you’d all require before any AI-enabled targeting system is deployed at scale.

Scharre:

Independent audit logs + testing under realistic conditions.

O’Connell:

A legal standard: no lethal use without provable human judgment that satisfies IHL requirements.

Roff:

A design requirement: interfaces that reduce automation bias and make dissent easy—friction to kill, not friction to pause.

Kreps:

Operational governance: clear doctrine, training, and accountability that matches the actual workflow—not PR language.

Arpaio:

Civilian protection mechanisms: transparency, investigation, and remedy—and a hard rule that uncertain targeting defaults to restraint.

Walzer:

Good. We have a core: verifiable human judgment, traceability, bias-resistant design, real-world governance, and civilian-centered safeguards. Now the fight becomes enforcement.

Topic 2 — Safety Controls vs Power Pressure

Theme: Can “safety brakes” survive real-world incentives—competition, war, secrecy, and state demand?

Moderator

Zeynep Tufekci (technology + institutions + power)

Five Participants

- Stuart Russell (AI safety; long-time critic of autonomous weapons)

- Dario Amodei (frontier model governance; deployment realities)

- Joanne Jang (model policy & safety; practical constraints + rollout decisions)

- Ben Buchanan (AI + national security; state incentives and strategy)

- Margaret Mitchell (AI ethics; evaluation, bias, and deployment risk)

3 Critical Questions (the spine of the episode)

- Are safety limits “real,” or just product settings? What breaks first under pressure: refusal policies, capability caps, or oversight?

- Who gets override authority—and when? If a government claims “national security emergency,” what’s the line companies must not cross?

- What’s the least-bad dual-use containment model? Licensing, compute controls, escrowed weights, mandatory audits, secure enclaves, or international monitoring?

1) Opening Positions (everyone states their “non-negotiable”)

Tufekci (Moderator):

One sentence each: what’s the one safety principle you won’t trade away—even if it costs market share or triggers state anger?

Russell:

No system should be allowed to select and engage human targets without robust, enforceable human control—ever.

Amodei:

If the system is powerful enough to cause large-scale harm, you need measurable safety standards and a governance process that can say “no,” even to powerful customers.

Jang:

Safety can’t be vibes. It has to be operational: clear policies, eval gates, monitoring, and the ability to pause rollout when risk shifts.

Buchanan:

You can’t ignore state incentives. If democratic systems don’t set rules, the vacuum gets filled by secret programs and adversaries. Governance must be realistic.

Mitchell:

If you can’t evaluate harms, you can’t claim safety. My non-negotiable is transparent, repeatable evaluation and honest reporting of failure modes.

2) “Brakes” Under Pressure (what actually fails first?)

Tufekci:

Let’s get concrete. A powerful state says: “We need this capability. Now.” What breaks first?

Jang:

Two things break fast: scope creep (“just this one exception”) and silent policy drift (capabilities expand but documentation doesn’t). The failure isn’t a villain—it’s a thousand tiny “temporary” changes.

Amodei:

There’s also the competitive mirror: if you believe rivals will ship without brakes, you’re tempted to loosen yours. That’s why you need external commitments—standards you don’t control alone.

Russell:

But here’s the hard truth: if the incentive is strong enough, you can rationalize anything. That’s why some lines must be structural, not voluntary—like legal constraints and verifiable controls.

Buchanan:

And yet overly rigid rules can push everything into classified channels. Then you lose public accountability entirely. A workable approach is: allow limited use with auditability, not blanket permission.

Mitchell:

Auditability isn’t optional. Without logs and eval transparency, “trust us” becomes the policy. And “trust us” is what fails in wartime.

3) The Override Question (who has the keys?)

Tufekci:

Who should have override authority: the company, the state, an independent board, a court, an international body?

Buchanan:

States will insist they must have a say—especially in emergencies. The question is whether that say is bounded and reviewable. Courts and legislative oversight matter.

Russell:

I don’t trust emergency logic. Emergencies are when we do irreversible things. If override exists, it should be narrow, logged, and independently reviewable—and not for lethal autonomy.

Jang:

Companies also need internal structures that can resist pressure: clear escalation pathways, documented exceptions, and a culture where safety teams can actually stop a release.

Amodei:

Independent review is crucial, but it must be competent and fast enough to matter. Otherwise, decision-making collapses back to the loudest power center.

Mitchell:

And the public needs some visibility. Not the secret sauce—but the shape of risk: what was tested, what failed, what was mitigated, and what wasn’t.

4) Dual-Use: The Core Instability (can we contain it?)

Tufekci:

The same model can tutor kids or optimize killing. Is dual-use manageable—or inherently unstable?

Russell:

Some dual-use is manageable; lethal autonomy is not. If the best safety argument is “we’ll be careful,” you’ve already lost.

Amodei:

Containment has to be layered: capability evaluations, deployment constraints, monitoring, and restrictions on tool use. It’s not one magic lock; it’s many locks.

Jang:

And don’t forget the human layer: training, access control, and red-team testing. A model isn’t “safe” in isolation; it’s safe in a system.

Mitchell:

Also: measure the harms you’re not incentivized to see—bias, surveillance, repression, and indirect violence. Dual-use isn’t only bombs; it’s control.

Buchanan:

One more realism check: adversaries won’t adopt your ethics. So democracies need defensive competence while preventing runaway escalation. That tension is real.

5) R1 Synthesis (forced agreement: minimum viable guardrails)

Tufekci:

I want one “minimum guardrail package” you’d accept as a baseline for frontier models under geopolitical pressure.

Russell:

A clear red line: no autonomous lethal targeting, plus enforceable human-control requirements.

Amodei:

Mandatory safety eval gates before deployment, with external review for high-risk capability jumps.

Jang:

Continuous monitoring + incident reporting, and the ability to pause/roll back deployment quickly when risk changes.

Mitchell:

Transparent evaluation protocols, bias and misuse testing, and honest public reporting of limitations and failures.

Buchanan:

A governance structure that’s enforceable: audits, logged exceptions, and democratic oversight—so it doesn’t all move into secrecy.

Tufekci:

So the shared baseline is: red lines on lethal autonomy, measurable eval gates, rollback capacity, transparency on failures, and governance that doesn’t collapse into black-box secrecy. Good—now we can argue about how to enforce it internationally.

Topic 3 — Proof, Transparency, and Public Verification

Theme: When someone claims “AI was used in a strike,” what would it take to prove it—credibly, independently, and without trusting the actors involved?

Moderator

Eliot Higgins (Bellingcat; OSINT verification mindset)

Five Participants

- Hany Farid (digital/media forensics; authenticity & provenance)

- Cynthia Rudin (interpretable ML; accountability vs black boxes)

- Matt Tait (cybersecurity; logs, attribution, and operational reality)

- Joanna Bryson (AI governance + accountability; policy and ethics)

- Aviv Ovadya (information integrity; institutional trust and failure modes)

3 Critical Questions (the spine of the episode)

- What counts as proof that “Model X was used” in Operation Y? Is it audit logs, procurement records, classified docs, system telemetry, or something like model “fingerprints”?

- Can we build verification that survives adversaries and secrecy? Watermarks, signed outputs, secure enclaves, hardware attestation, chain-of-custody logs—what actually holds up?

- How do we design transparency without leaking operational secrets? What can be public, what should be shared with independent auditors, and what must stay classified?

1) Opening Positions (everyone states the “hard reality”)

Higgins (Moderator):

One sentence each: when you hear “AI was used in the strike,” what’s your first reaction as a professional?

Farid:

My first reaction is: Show me the artifacts. Without provenance and a chain of custody, it’s just a story.

Rudin:

My first reaction is: Even if AI was used, can we explain the decision path? Because “used AI” isn’t the same as “AI decided.”

Tait:

My first reaction is: Attribution is hard. People underestimate how easy it is to spoof, sanitize logs, or hide tooling in a classified stack.

Bryson:

My first reaction is: Define “used.” Was it translation, targeting, threat scoring, route planning, or the authorization step?

Ovadya:

My first reaction is: What’s the incentive? Claims like this are often strategic—used to justify, intimidate, or shift blame.

2) Define “AI Was Used” (because sloppy language ruins verification)

Higgins:

Let’s pin it down. What are the categories of “AI involvement” we need to separate?

Bryson:

At least four:

- Decision support (analysis, summaries, threat scoring)

- Target development (identifying candidates)

- Target selection (choosing among candidates)

- Execution (weapon guidance, engagement timing)

Most public debate collapses all of that into one phrase.

Rudin:

And the accountability changes by layer. If AI is doing recommendation vs selection, the evidence we need—and the ethical burden—changes.

Farid:

Exactly. If someone claims “Model X picked targets,” you need different proof than if someone claims “Model X summarized intel.”

Tait:

Also: “Model X” rarely exists alone. Real systems are pipelines—data fusion, heuristics, human workflows, multiple models, proprietary tooling.

Ovadya:

Which is why propaganda thrives here. Vague claims can’t be falsified, so they spread.

3) What Would Count as Proof? (standards that don’t rely on trust)

Higgins:

Okay. If we wanted a credible public standard, what evidence actually matters?

Farid:

Three layers:

- Documentary evidence (contracts, procurement, internal comms)

- Technical evidence (telemetry, logs, model calls, timestamps)

- Forensic evidence (artifacts that survive tampering—signed records, hardware attestation)

One layer alone is usually not enough.

Tait:

And you must assume hostile conditions: people will launder logs, run models through intermediaries, or label everything “classified.” So you need tamper-evident systems—ideally built before the incident.

Rudin:

From the ML side: “model fingerprints” are tempting, but not easy. Outputs aren’t reliable signatures. What you want are verifiable records of model invocation and the context it was given.

Bryson:

Plus: proof should show role, not just presence. If the model touched the workflow but didn’t influence the decision, that’s materially different.

Ovadya:

And remember public legitimacy: people won’t accept “trust the ministry” or “trust the vendor.” The verification has to be explainable to non-experts.

4) Can Verification Be Designed In? (so we’re not guessing afterward)

Higgins:

Let’s talk architecture. What would “verifiable AI” look like in high-stakes security contexts?

Tait:

Use secure enclaves + hardware attestation so you can prove: “This exact software ran, with this policy, at this time,” and logs can’t be rewritten quietly.

Farid:

Add cryptographic signing and chain-of-custody for data and outputs—so every step leaves a tamper-evident trace.
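A tamper-evident trace can be illustrated with a hash chain, in which each log entry commits to the hash of the one before it. This is a simplified stand-in for the signing and attestation the panelists describe, not an implementation of them: the function names and the entry layout are assumptions for this sketch, and it detects edits only if the latest hash is also held by someone independent.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    # Hash a canonical serialization of the previous hash plus this entry's payload.
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "payload": payload,
                "hash": _entry_hash(prev_hash, payload)})


def verify_chain(log: list) -> bool:
    """Recompute every link; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"step": "model invoked", "model": "hypothetical-model-x"})
append_entry(log, {"step": "output shown to reviewer"})
print(verify_chain(log))            # chain is intact
log[0]["payload"]["model"] = "something-else"
print(verify_chain(log))            # the quiet edit is now detectable
```

Someone who controls the whole log could still rebuild the chain from scratch, which is why the design choice that matters is where the head hash is published or countersigned, and by whom.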

Rudin:

Add structured decision records: what signals mattered, what uncertainty was reported, what alternatives were considered. Without this, you can’t audit proportionality or reasonableness.

Bryson:

And add policy: mandatory incident disclosure to an independent reviewer, like aviation. Not every detail public—but reviewable.

Ovadya:

But the biggest barrier is incentives. States and firms often prefer ambiguity because it preserves freedom of action and blame-shifting.

5) The Secrecy Dilemma (transparency without giving away tactics)

Higgins:

Where’s the line? What can be transparent without exposing operational secrets?

Bryson:

You can publish governance facts: what categories of use are allowed, what red lines exist, what audits occur, what failure reporting looks like.

Rudin:

You can share evaluation results and the kinds of failure modes you tested—without revealing exact data sources or targets.

Tait:

You can create a tiered model: public summary, confidential auditor access, and tightly controlled classified annexes. The point is: someone independent must see the truth.

Farid:

And you can allow post-incident verification of claims without revealing tactics—if the logs are designed correctly.

Ovadya:

The key is credibility. If the public sees a closed loop of “we investigated ourselves,” trust collapses.

6) R1 Synthesis (forced agreement: minimum viable verification)

Higgins:

Give me a minimum package. If a government or contractor says “AI wasn’t used” or “AI was used responsibly,” what must exist for that statement to be credible?

Farid:

Tamper-evident provenance: signed logs + chain of custody.

Tait:

Independent access: a system where logs can be audited externally, not just internally.

Rudin:

Decision trace: a record of what the model did, its uncertainty, and how it influenced decisions.

Bryson:

Clear definitions: standardized categories of “AI involvement,” so claims can be tested.

Ovadya:

Public reporting norms: meaningful disclosure that allows society to evaluate risk—without relying on rumors.

Higgins:

So the shared baseline is: define the kind of AI involvement, record it in tamper-evident ways, let independent reviewers audit it, preserve decision traceability, and disclose enough to maintain legitimacy. Now we can actually argue about treaties and enforcement without living in rumor-land.

Topic 4 — Information War, Propaganda, and De-Escalation

Theme: Does AI reduce fear by spreading shared understanding—or does it become the most scalable propaganda and surveillance engine ever built?

Moderator

Anne Applebaum (propaganda systems + authoritarian dynamics)

Five Participants

- Renée DiResta (influence operations + platform manipulation)

- Kate Starbird (networked misinformation; how narratives spread)

- P.W. Singer (future war + information conflict)

- Samantha Power (diplomacy + human rights / atrocity prevention lens)

- Timnit Gebru (power, data, and institutional bias; structural critique)

3 Critical Questions (the spine of the episode)

- AI as de-escalation tool: Can “shared facts + translation + context” actually humanize enemies and reduce violence?

- AI as control tool: When states use AI for surveillance/censorship in war, where’s the line between “public safety” and “manufactured consent”?

- Who can be trusted to run “truth infrastructure”? Governments, platforms, NGOs, open-source collectives—or no one?

1) Opening Positions (everyone states the uncomfortable truth)

Applebaum (Moderator):

One sentence each: what’s the most dangerous illusion people have about AI in information war?

DiResta:

The illusion that more information equals more truth—AI can flood the zone faster than we can think.

Starbird:

The illusion that misinformation is “bad content” you can remove—when it’s really social identity and networks.

Singer:

The illusion that this is new—information war is old; AI just makes it faster, cheaper, and deniable.

Power:

The illusion that civilians are spectators—information war is often the main battlefield, shaping atrocities and escalation.

Gebru:

The illusion that “neutral AI” exists—models reflect power, data, and incentives, and those aren’t neutral.

2) Can AI Actually De-Escalate? (shared understanding vs engineered narratives)

Applebaum:

The transcript’s big hope is: if people on both sides ask the same AI, they’ll see the same truth. Is that realistic?

Power:

In principle, yes—translation and context can reduce dehumanization. But in practice, the question is: who controls the model and the training signals? Trust collapses if it feels like a foreign narrative machine.

DiResta:

Also, even if the AI is honest, it can be weaponized by selective quoting. People won’t consume it like a textbook. They’ll turn it into clips, memes, and “gotchas.”

Starbird:

And communities interpret “truth” through group belonging. If your identity is threatened, you don’t accept facts—you reject the messenger. AI doesn’t change that. It may even intensify it by making “counter-evidence” infinite.

Singer:

The strategic point: states don’t necessarily want de-escalation. They want cohesion and compliance at home. AI enables constant, personalized mobilization messaging.

Gebru:

And “same AI gives same answer” is not guaranteed. Different regions get different products, different policy layers, different censorship rules. “Shared truth” becomes segmented truth.

3) Surveillance and Wartime Censorship (public safety vs manufactured consent)

Applebaum:

Let’s go straight to the hard question: In wartime, states say “We must monitor and censor for safety.” Where’s the line?

Gebru:

The line is power: once surveillance expands, it rarely shrinks. AI makes surveillance cheap and automated, and it becomes normal. The public gets used to being watched.

Power:

I agree. The danger is that “security” becomes permission to silence dissent, journalists, and minority groups. The line should be: censorship must be narrow, time-limited, and independently reviewable. Otherwise it’s oppression.

DiResta:

And there’s a second line: “narrative enforcement.” Platforms and states can use AI to throttle topics, label them, or bury them—without visibly “censoring.” It’s soft control.

Starbird:

Plus, visibility is not neutrality. If AI decides which posts spread and which don’t, it shapes public reality. That’s governance by ranking.

Singer:

From a defense lens, governments will do it anyway under stress. The question becomes: do democracies build rules and oversight, or do they copy authoritarian tactics and justify it as necessity?

4) The Trust Problem (who can run “truth infrastructure”?)

Applebaum:

If AI is going to “reduce propaganda,” someone has to run the system. Who is credible enough?

Power:

International bodies can help, but they’re slow and politically constrained. NGOs can help, but they’re attacked as biased. Ideally, you want multi-stakeholder governance with auditability.

DiResta:

We should assume no single actor is trusted. So build systems that are verifiable, where claims can be checked and sources traced. Not “trust me,” but “here’s how to verify.”

Starbird:

But verification doesn’t automatically win. Communities still choose narratives that fit their identity. We need civic resilience, not only technical fixes.

Gebru:

Also: if the model is proprietary, you can’t inspect it. Trust requires transparency—about training data, policies, and who benefits.

Singer:

And you need redundancy. In war, systems get attacked. The “truth layer” must be resilient like infrastructure.

5) What a Realistic “De-Escalation AI” Might Look Like (if it’s possible at all)

Applebaum:

If we attempted this responsibly, what would it look like?

Power:

Tools that amplify humanitarian information: safe corridors, verified casualty reporting, multilingual briefings that humanize civilians.

DiResta:

A “context engine” that shows provenance: Where did this come from? How reliable is it? What’s missing?—and it must work at the speed of social media.

Starbird:

Community-embedded messengers. People trust humans more than systems. AI should support trusted local mediators, not replace them.

Gebru:

Independent audits and equity: ensure it doesn’t systematically silence certain dialects, communities, or political positions.

Singer:

And clear boundaries: the moment it becomes a tool for targeting individuals or suppressing dissent, it fails the mission.

6) R1 Synthesis (forced agreement: minimum safeguards for “truth AI”)

Applebaum:

Give me the minimum safeguards that must exist before anyone claims “AI will reduce propaganda and de-escalate conflict.”

DiResta:

Provenance and friction against virality: traceable sources + limits on spammy amplification.

Starbird:

Network literacy: design around community dynamics—support trusted intermediaries and track how narratives move.

Singer:

Resilience and adversarial planning: assume attack, spoofing, and information ops from day one.

Power:

Human rights guardrails: narrow surveillance, oversight, and protections for journalists/civilians.

Gebru:

Transparency and accountability: audits, bias testing, and clear governance—no black-box “truth ministry.”

Applebaum:

So the baseline is: provenance, community-aware design, adversarial resilience, human-rights limits, and transparent governance. Without those, “AI for peace” becomes AI for control.

Topic 5 — Escalation Speed and Human Consequences

Theme: When AI compresses time and increases “precision,” does it prevent war—or make catastrophe more likely and more psychologically corrosive?

Moderator

Rosa Brooks (war + law + the human reality of modern conflict; sharp but humane)

Five Participants

- Thomas Schelling (deterrence + escalation theory; “the logic of threats”)

- Daniel Kahneman (decision-making under uncertainty; cognitive failure modes)

- Paul Scharre (operational tempo + autonomy risks)

- Jonathan Shay (moral injury / trauma; Achilles in Vietnam)

- Rachel Kleinfeld (conflict/violence dynamics; prevention + escalation pathways)

3 Critical Questions (the spine of the episode)

- Speed kills—or saves? Does AI-driven “decision advantage” reduce violence through precision, or increase accidental escalation by shrinking human deliberation time?

- What are the predictable failure modes? False positives, adversarial manipulation, feedback loops, miscalibrated confidence, automation bias—what breaks first under stress?

- What happens to humans when machines mediate killing? How do moral injury, responsibility diffusion, and political accountability change when decisions become “dashboard-driven”?

1) Opening Positions (everyone states the core fear)

Brooks (Moderator):

One sentence each: what is your biggest fear about AI’s role in escalation?

Schelling:

My fear is involuntary escalation—situations where no one wants war, but the process drifts into it.

Kahneman:

My fear is false certainty: decision-makers will trust a confident system more than their own doubts.

Scharre:

My fear is speed and complexity: humans will be unable to understand the system fast enough to control it.

Shay:

My fear is moral corrosion—killing becomes administratively clean, but psychologically and socially poisonous.

Kleinfeld:

My fear is that AI makes violence feel inevitable and optimized, reducing investment in prevention and diplomacy.

2) Speed: Advantage or Trap? (the tempo problem)

Brooks:

Militaries chase “decision advantage.” In plain language: faster than the other side. Does that prevent war or manufacture it?

Schelling:

Speed can deter—but it can also create traps. If both sides speed up, you reduce the time for signals, negotiation, and face-saving exits. That’s when accidents become destiny.

Scharre:

And “faster” often means “more automated.” The scary part is not speed alone; it’s speed plus systems that humans can’t fully interrogate in real time.

Kahneman:

There’s also the psychological effect: urgency narrows thinking. Under time pressure, people accept simpler stories and defer to authority—especially a machine that appears objective.

Kleinfeld:

War is often escalation by misunderstanding. If AI shortens the space for interpretation, you may remove the human ability to say, “Wait—what if we’re misreading this?”

Shay:

And when speed becomes a virtue, hesitation becomes a vice. That pressures humans to comply, not reflect. Later, the moral weight returns—often as injury.

3) Failure Modes Under Stress (how things actually go wrong)

Brooks:

Let’s list the ways this fails in the real world. Not sci-fi. Normal failure.

Scharre:

Start with automation bias: humans over-trust system outputs, especially in complex environments. Then data drift: conditions change, models degrade. Then confidence miscalibration: the system looks certain when it shouldn’t.
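(Editorial aside: confidence miscalibration is one failure mode that can be measured rather than just feared. The sketch below computes a simple expected calibration error, the weighted gap between how confident a system says it is and how often it turns out to be right. The function name, the binning scheme, and the sample numbers are illustrative assumptions, not drawn from any fielded system.)

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy."""
    # Group predictions into equal-width confidence bins (an assumed, common choice).
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the top bin
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for items in bins:
        if not items:
            continue
        avg_conf = sum(c for c, _ in items) / len(items)
        accuracy = sum(1 for _, ok in items if ok) / len(items)
        # Each bin contributes in proportion to how many predictions fall in it.
        ece += (len(items) / total) * abs(avg_conf - accuracy)
    return ece


# Hypothetical data: the system reports 95% confidence but is right half the time.
stated = [0.95, 0.95, 0.95, 0.95]
was_right = [True, True, False, False]
gap = expected_calibration_error(stated, was_right)
print(round(gap, 2))
```

A large gap means the display is promising more certainty than the track record supports, which is the condition under which automation bias does the most damage.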

Kahneman:

Add availability and framing: decision-makers overweight recent attacks and interpret ambiguous signals as threats. AI can amplify this by presenting patterns that “feel inevitable.”

Schelling:

And add adversaries: spoofing and manipulation. If you can trick an opponent’s system into seeing a threat, you can provoke them into actions that escalate.

Kleinfeld:

Plus domestic politics: when leaders can claim “the system said so,” it becomes easier to justify force and harder to back down.

Shay:

And the human cost gets hidden until it explodes: operators feel detached during action, then shattered afterward—especially when civilians die and responsibility feels both everywhere and nowhere.

4) Precision Isn’t Morality (the “clean strike” illusion)

Brooks:

The transcript describes “precision” increasing over time: smaller blast radius, fewer bystanders. Is more precision automatically more ethical?

Schelling:

Precision changes the bargaining environment. It can make violence seem cheaper and therefore more usable—lowering the threshold for action.

Kleinfeld:

Yes. If leaders believe strikes are “surgical,” they may choose violence earlier rather than diplomacy. That’s escalation by confidence.

Scharre:

Also: precision depends on correct identification. If the target is misidentified, precision just means you hit the wrong thing perfectly.

Kahneman:

Humans systematically underestimate uncertainty. Precision tech increases the temptation to believe uncertainty has been solved.

Shay:

And “clean” killing can be psychologically dirtier. When killing is reduced to a procedure, society loses the friction that forces moral reckoning.

5) Human Consequences (moral injury + responsibility diffusion)

Brooks:

If an AI system recommends, a human approves, and civilians die—who carries it?

Shay:

Operators often carry it anyway. Even when they’re told, “You followed protocol,” the conscience doesn’t accept procedural innocence.

Kahneman:

Organizations diffuse responsibility by design. When many small actions lead to a tragedy, no one feels fully responsible—and that is how tragedies repeat.

Scharre:

Which is why traceability matters. But traceability doesn’t automatically create accountability—culture must reward refusal and slowing down.

Kleinfeld:

And at the political level, “AI said so” becomes a shield. Democracies are weakened when citizens can’t locate responsibility.

Schelling:

Strategically, that’s dangerous: if no one can credibly signal restraint or accept blame, escalation becomes harder to stop.

6) R1 Synthesis (forced agreement: what must be true to avoid catastrophe)

Brooks:

Give me the minimum conditions that must exist before you allow AI to accelerate wartime decision cycles.

Schelling:

You need deliberation space—mechanisms that slow escalation and allow signaling and off-ramps.

Kahneman:

You need systems that display uncertainty honestly, and training that fights automation bias—humility engineered into the workflow.

Scharre:

You need limits on automation at high stakes, plus monitoring and testing under real conditions—no “black box at war speed.”

Shay:

You need moral and psychological safeguards: accountability that’s real, plus care for people forced to carry these decisions.

Kleinfeld:

You need prevention investment: diplomacy, conflict de-escalation channels, and violence interruption—so “optimized force” isn’t the default tool.

Brooks:

So the baseline is: preserve off-ramps, engineer uncertainty and humility, limit high-stakes automation, protect human moral health, and invest in prevention. Without these, AI doesn’t “manage war”—it accelerates it.

Final Thoughts by Bruce Schneier

If there’s one idea to take away, it’s this: in war, the most dangerous system is the one that nobody can explain, nobody can audit, and everybody relies on anyway.

We’ve learned this lesson in cybersecurity for decades. Complexity doesn’t just create bugs—it creates unknown unknowns. And when AI systems are dropped into high-stakes conflict environments, the failures won’t look like a dramatic robot uprising. They’ll look like ordinary institutional failure: overconfidence, bad data, automation bias, pressure to act fast, and a chain of “reasonable” steps that ends in something irreversible.

So what do we do?

First, define the claim. “AI was used” is meaningless without saying where: translation, intel triage, target development, target selection, or execution. Each has different risks and different accountability.

Second, demand verifiability. If a government or contractor wants public trust, there must be tamper-evident logs, audit trails, and independent review—at least for the parts of the system where AI influences life-and-death decisions. Secrecy can protect tactics, but secrecy cannot be an excuse for zero accountability.
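To make “tamper-evident” concrete, here is a minimal sketch of one common approach, a hash chain, in which each log entry commits to the hash of the entry before it. The record fields, the function names, and the all-zero starting hash are assumptions made for illustration; this is not a description of any real military or vendor logging system.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder "previous hash" for the first entry


def entry_hash(prev_hash, record):
    # Hash the previous entry's hash together with a canonical JSON form of the record.
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log, record):
    # Link the new entry to the current tail of the log.
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": entry_hash(prev_hash, record)})


def verify(log):
    # Recompute every hash in order; any edited or reordered entry breaks the chain.
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decision trail: a model recommendation followed by a human approval.
audit_log = []
append(audit_log, {"step": "recommendation", "actor": "model", "confidence": 0.62})
append(audit_log, {"step": "approval", "actor": "human_reviewer"})
print(verify(audit_log))  # True

audit_log[0]["record"]["confidence"] = 0.99  # quietly rewrite history
print(verify(audit_log))  # False
```

A chain like this only shows tampering if someone outside the operator's control holds a copy of the latest hash; otherwise whoever edits the log can recompute every hash after the change. That is why the independent review matters as much as the logging.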

Third, slow down the loop. In escalation dynamics, time is safety. You build procedures that create friction: cross-checks, uncertainty reporting, and real authority for humans to stop the process—without being punished for slowing it down.

And finally, don’t confuse precision with morality. Hitting the wrong target perfectly is not progress. A clean dashboard does not mean a clean conscience. If AI makes violence feel easier, it lowers the threshold for force—and that’s a strategic risk, not just an ethical one.

We can’t uninvent this technology. But we can choose whether it becomes a machine for faster mistakes—or a system that forces more truth, more restraint, and more accountability.

That’s the test. And it’s not a technical test. It’s a governance test.

Short Bios:

Topic 1 — Autonomy & Accountability

Moderator — Paul Scharre

Former U.S. Army Ranger and defense policy expert focused on autonomous weapons and escalation risks. Author of Army of None.

Rebecca Crootof

Law professor who studies how international law applies to autonomous and AI-enabled weapons. Focuses on accountability when machines influence force decisions.

Michael C. Horowitz

Political scientist and national security expert on how militaries adopt new technologies. Researches how AI changes deterrence and warfighting behavior.

Heather Roff

Ethicist specializing in autonomy in weapons systems and the moral limits of machine decision-making. Works at the intersection of AI policy and security.

Stuart Russell

AI researcher known for work on AI safety and value alignment. Warns about loss of human control as systems grow more capable.

Sarah Kreps

Scholar on technology, politics, and conflict, including drones and AI in military operations. Studies how public legitimacy and oversight change with new tools.

Topic 2 — Safety Brakes vs Power Pressure

Moderator — Helen Toner

AI governance researcher focused on policy, incentives, and risk management. Known for clear, non-hype framing of frontier AI issues.

Dario Amodei

AI leader focused on building advanced models while reducing catastrophic risks. Often speaks about safety tradeoffs and responsible deployment.

Yoshua Bengio

Pioneering deep learning researcher and major voice on AI safety and oversight. Advocates for strong guardrails as capability increases.

Timnit Gebru

Researcher known for work on bias, power, and accountability in AI systems. Pushes for transparency and scrutiny of who benefits and who is harmed.

Jason Matheny

National security and technology policy expert with experience across government and research. Focuses on AI risk, preparedness, and strategic stability.

Gillian Hadfield

Legal scholar working on governance systems that can keep pace with fast-moving technology. Focuses on practical “rules + enforcement” design, not just principles.

Topic 3 — Proof & Verification

Moderator — Bruce Schneier

Security technologist known for explaining risk and trust in plain language. Focuses on what can be audited, verified, and held accountable.

Hany Farid

Digital forensics expert who develops methods to authenticate media and detect manipulation. Known for practical, evidence-first approaches.

Susan Landau

Cybersecurity and privacy scholar focused on how technical systems affect rights and governance. Strong on oversight, audits, and institutional safeguards.

Matt Blaze

Computer security researcher known for work on cryptography and system reliability. Focuses on how complex systems fail under real-world pressure.

Katie Moussouris

Security industry leader known for building vulnerability disclosure and bug bounty programs. Brings a “how we fix this in practice” mindset.

Alex Stamos

Cybersecurity expert with experience defending platforms against coordinated attacks. Focuses on attribution, incident response, and information integrity.

Topic 4 — Information War, Propaganda, and De-Escalation

Moderator — Anne Applebaum

Historian and journalist who studies propaganda and authoritarian systems. Sharp at connecting narratives, institutions, and power.

Renée DiResta

Researcher on influence operations and how narratives get engineered online. Explains manipulation tactics clearly and concretely.

Kate Starbird

Professor who studies how misinformation spreads through networks and communities. Focuses on the social mechanics, not just “bad content.”

P.W. Singer

Analyst on modern conflict and emerging technologies in war. Known for translating complex security issues for broad audiences.

Samantha Power

Diplomacy and human rights leader focused on civilian protection and atrocity prevention. Brings a moral and policy lens to conflict escalation.

Timnit Gebru

Researcher known for examining power, bias, and accountability in AI systems. Highlights structural risks when AI becomes a tool of control.

Topic 5 — Escalation Speed & Human Consequences

Moderator — Rosa Brooks

Legal scholar and former defense official focused on the realities of modern warfare. Strong at bridging strategy, ethics, and human impact.

Thomas Schelling

Nobel Prize–winning strategist known for deterrence and escalation theory. Helped define how coercion and bargaining shape conflict.

Daniel Kahneman

Nobel Prize–winning psychologist who studied judgment under uncertainty. Known for explaining why humans misread risk and over-trust “confident” answers.

Paul Scharre

Defense analyst focused on autonomy, operational tempo, and escalation risk. Clear-eyed about how systems behave under pressure, not in demos.

Jonathan Shay

Psychiatrist known for work on combat trauma and moral injury. Connects war decisions to the long psychological cost carried by humans.

Rachel Kleinfeld

Expert on conflict, political violence, and prevention strategies. Focuses on practical pathways to reduce escalation before it turns into war.
