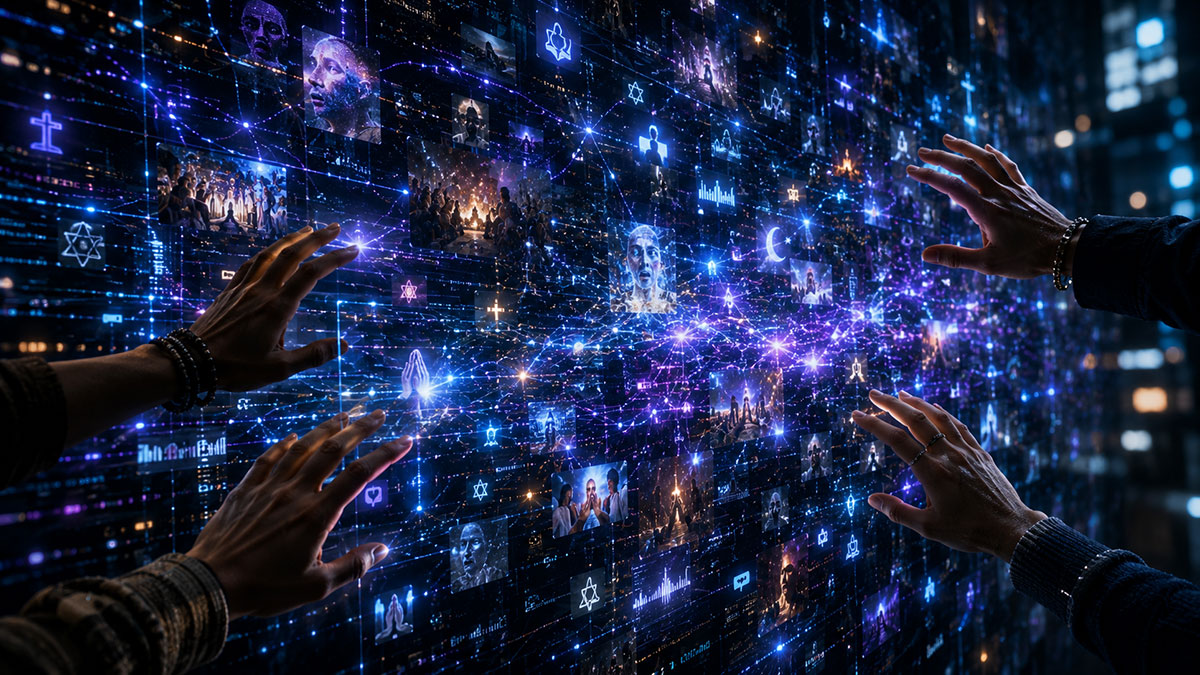

What if Buckminster Fuller and top futurists revealed whether AI can save civilization before it breaks us?

Introduction by Carl Sagan

In 2026, humanity stands between two mirrors.

One reflects astonishing possibility: artificial intelligence, planetary networks, robotics, clean energy, genetic engineering, and the dream of becoming a multiplanetary civilization. The other reflects an older reality: loneliness, tribalism, spiritual exhaustion, ecological instability, and uncertainty about what it means to remain human in an age of accelerating machines.

Into this tension comes an extraordinary gathering.

Buckminster Fuller, the systems thinker who warned humanity to learn how to operate “Spaceship Earth.”

Marshall McLuhan, who foresaw that media would reshape human consciousness itself.

Pierre Teilhard de Chardin, who believed humanity was evolving toward greater spiritual unity.

Elon Musk, the engineer attempting to reshape transportation, AI, energy, and space exploration in real time.

Ray Kurzweil, who argues that humanity and artificial intelligence may eventually merge into a higher form of intelligence.

Guiding them is Carl Sagan, whose cosmic perspective reminds the panel that Earth is still a tiny blue world carrying all human joy, suffering, history, and hope.

Across five discussions, they wrestle with questions that no civilization has ever faced at this scale:

- Will AI become humanity’s greatest servant—or its quiet ruler?

- Are digital networks creating planetary consciousness or mass confusion?

- Should humanity repair Earth before reaching for Mars?

- What happens when the media environment itself becomes intelligent?

- Can technological abundance exist without spiritual collapse?

The conversation moves between engineering and philosophy, science and faith, systems and soul.

No final consensus emerges.

But something larger becomes visible:

Humanity’s crisis may not be technological alone.

It may be a crisis of consciousness, meaning, and moral maturity.

And the future may depend on whether intelligence can grow faster than fear.

(Note: This is an imaginary conversation, a creative exploration of an idea, and not a real speech or event.)

Topic 1: Can AI Become Humanity’s Operating System — or Its New Master?

Moderator: Carl Sagan

Participants: Buckminster Fuller, Marshall McLuhan, Pierre Teilhard de Chardin, Elon Musk, Ray Kurzweil

Carl Sagan looked around the table with quiet concern.

“We are a young species holding very old dreams and very new tools. Artificial intelligence may become a telescope for the mind, helping us see patterns too large for any one person. Or it may become a mirror that magnifies our confusion, ambition, and fear.”

He paused.

“Tonight, I want to ask whether AI can serve humanity’s highest hopes—or whether it may quietly inherit our command.”

1. Who should guide AI: governments, corporations, open communities, or humanity as a whole?

Buckminster Fuller:

“The question assumes that our existing institutions are adequate containers for a planetary tool. They are not. Governments think in borders. Corporations think in advantage. Humanity must learn to think in whole systems. AI must be guided by the needs of the entire crew of Spaceship Earth.”

Marshall McLuhan:

“The manager of AI will not merely manage a machine. He will manage perception. Whoever shapes the interface shapes the nervous system of society. The danger is not only who owns AI, but who trains us to experience reality through it.”

Elon Musk:

“We need broad access, transparency, and serious safety work. If AI is controlled by a small group, that is dangerous. If it is released carelessly, that is dangerous too. The hard part is building fast enough to stay useful, but carefully enough to stay aligned.”

Pierre Teilhard de Chardin:

“Humanity is moving toward convergence, but convergence without love becomes domination. Intelligence alone is insufficient. AI must be guided by a moral center, or it will accelerate disorder under the appearance of progress.”

Ray Kurzweil:

“The best path is wide participation combined with safety frameworks. Intelligence wants to expand. The question is whether humans participate in that expansion or become passive observers. The future should be symbiotic, not authoritarian.”

2. Can AI help manage Earth’s food, energy, housing, and resources better than current systems?

Carl Sagan:

“We have always had enough imagination to build weapons. The test now is whether we have enough wisdom to build systems that feed children, preserve forests, and reduce needless suffering.”

Buckminster Fuller:

“Yes. Humanity’s failure is not physical scarcity. It is poor design. We already possess enough capability to support everyone, but our distribution systems are obsolete. AI could reveal the invisible efficiencies we refuse to see.”

Ray Kurzweil:

“AI can optimize agriculture, energy grids, medicine, construction, transportation, and education at scales no human bureaucracy can manage. The issue is not whether AI can help. It is whether our social systems will permit the benefits to reach everyone.”

Elon Musk:

“AI combined with robotics could make material abundance real. Energy is key. If energy becomes cheap and clean enough, many other problems get easier. Housing, transportation, food production—all can be improved with automation and better engineering.”

Marshall McLuhan:

“The machine that distributes bread may also distribute dreams. AI will not simply manage resources. It will redefine what people believe they need. A society can be materially fed and psychologically starved.”

Pierre Teilhard de Chardin:

“The Earth must not be managed as a warehouse. It is a living creation. AI may help humanity coordinate its labor, but it must not reduce nature to inventory. True abundance includes reverence.”

3. At what point does AI stop serving human purpose and start defining it?

Carl Sagan:

“This may be the most difficult question. A tool becomes dangerous when we forget it is a tool.”

Marshall McLuhan:

“The tool has already begun to define the user when the user no longer notices the tool. AI will become most powerful when it disappears into daily life and becomes environment.”

Ray Kurzweil:

“The boundary between tool and self will blur. That is not automatically bad. We have always extended ourselves through language, writing, medicine, and machines. The key is whether this expansion deepens human agency.”

Buckminster Fuller:

“Purpose cannot be outsourced. AI may calculate options, but humanity must choose its mission. Our task is not to become machine-directed consumers. Our task is to become conscious stewards of the whole planet.”

Elon Musk:

“If AI is much smarter than us, alignment becomes the central problem. We need to make sure it understands and preserves human values. The safest future is one where humans and AI are closely linked rather than separated.”

Pierre Teilhard de Chardin:

“AI begins defining human purpose when efficiency replaces love as the supreme value. The human future cannot be measured only by speed, intelligence, or control. It must be measured by the growth of consciousness and compassion.”

Carl Sagan folded his hands.

“Perhaps the question is not whether machines will become intelligent. Perhaps the question is whether intelligence, wherever it appears, can be joined to humility. A civilization clever enough to create artificial minds must become wise enough not to surrender its soul to them.”

Topic 2: Are We Building a Planetary Brain or a Digital Tower of Babel?

Moderator: Carl Sagan

Participants: Buckminster Fuller, Marshall McLuhan, Pierre Teilhard de Chardin, Elon Musk, Ray Kurzweil

Carl Sagan looked at the glowing Earth on the screen behind them.

“From space, Earth has no visible borders. Yet on our screens, humanity often appears divided into tribes, languages, fears, and competing realities. We have connected billions of minds, but connection is not the same as communion.”

He turned to the panel.

“Are we witnessing the birth of a planetary brain—or the construction of a digital Tower of Babel?”

1. Is the internet becoming a shared human mind, or a machine that fragments reality?

Pierre Teilhard de Chardin:

“I once sensed that humanity was moving toward a greater sphere of thought, a noosphere. The internet resembles this dream, but it remains immature. A shared mind without shared love becomes noise. Connection alone does not create unity.”

Marshall McLuhan:

“The global village is not peaceful by nature. Villages are full of gossip, rivalry, suspicion, and instant emotional reaction. Electronic media compress distance, but compression creates friction. The internet is a shared nervous system, and it is often inflamed.”

Buckminster Fuller:

“The internet gives humanity an instrument for whole-planet coordination, but we still use it through obsolete competitive habits. We have a global dashboard, yet we lack a global purpose. That is the design failure.”

Ray Kurzweil:

“It is both fragmentation and convergence. Early networks create noise. Over time, intelligence systems will filter, translate, and integrate knowledge. The planetary brain is not complete, but the direction is clear: minds, machines, and data are merging.”

Elon Musk:

“The internet gave everyone a voice, which is good, but it created massive signal-to-noise problems. AI may help sort truth from noise, but it can also create more noise. The future depends on building systems that reward accuracy, not outrage.”

2. Can spiritual consciousness and artificial intelligence grow together?

Carl Sagan:

“Science does not diminish wonder. It enlarges it. The question is whether artificial intelligence can deepen our reverence for life, or whether it will merely sharpen our appetite for control.”

Pierre Teilhard de Chardin:

“Yes, but only if intelligence is joined to love. Consciousness is not merely calculation. The human spirit grows through relationship, suffering, hope, and self-giving. AI may assist this growth, but it cannot replace the inner ascent of the person.”

Ray Kurzweil:

“Spirituality and intelligence are not enemies. As intelligence expands, our sense of identity may expand too. Human beings have always used tools to transcend biological limits. AI could open new experiences of consciousness.”

Buckminster Fuller:

“Spirituality must become operational. Love cannot remain sentimental while systems produce hunger, waste, and war. If AI helps humanity serve all people with fewer resources, then it becomes part of a practical moral awakening.”

Marshall McLuhan:

“The danger is that people may confuse electronic stimulation with spiritual awakening. A medium can simulate intimacy, depth, and revelation. The question is whether the user is transformed, or merely entranced.”

Elon Musk:

“I think consciousness is rare and worth protecting. AI could help us understand the brain and maybe expand consciousness, but we should be careful. We do not want to build something that makes human experience irrelevant.”

3. What would true planetary unity look like in 2026?

Carl Sagan:

“We do not need everyone to think alike. A healthy planet is not uniform. It is interdependent.”

Buckminster Fuller:

“True unity would mean every child is included in the design equation. Food, shelter, education, energy, and health would no longer depend on accidents of birthplace. The planet would be treated as one integrated system.”

Marshall McLuhan:

“Unity cannot mean one message broadcast to all. That becomes empire. True unity would be awareness of effects: every group knowing that its media, weapons, markets, and myths alter every other group.”

Pierre Teilhard de Chardin:

“True unity is not sameness. It is communion. Humanity must become more personal, not less personal, as it becomes more collective. The highest unity protects the dignity of each soul.”

Ray Kurzweil:

“In 2026, true unity may begin with universal translation, shared knowledge systems, personalized education, and medical intelligence available to all. Technology can reduce barriers between people.”

Elon Musk:

“Practical unity means solving problems together: energy, AI safety, space, disease, education. We need less tribalism and more engineering reality. If something works, scale it. If something is broken, fix it.”

Carl Sagan smiled gently.

“A planetary brain without compassion may become a planetary machine. A digital Tower of Babel may teach us humility. Perhaps the real task is not merely to connect minds, but to help them recognize one another.”

Topic 3: Should Humanity Fix Earth First or Escape to Mars?

Moderator: Carl Sagan

Participants: Buckminster Fuller, Marshall McLuhan, Pierre Teilhard de Chardin, Elon Musk, Ray Kurzweil

Carl Sagan gazed at the image of Mars beside Earth.

“Here are two worlds: one red, silent, and distant; the other blue, wounded, and alive. Mars invites our ambition. Earth demands our responsibility.”

He turned to the panel.

“Should humanity reach for another planet before it has learned how to care for this one?”

1. Is Mars a noble backup plan, or a distraction from healing Earth?

Elon Musk:

“It is a backup plan, but not an excuse to abandon Earth. Earth is precious. Still, civilization should not remain single-planet forever. A self-sustaining Mars city increases the chance that consciousness survives.”

Buckminster Fuller:

“Earth is already our operating spacecraft. We have not yet learned to manage its life-support systems. To flee without learning design discipline would mean exporting failure. The problem is not Earth. The problem is our mismanagement of Earth.”

Ray Kurzweil:

“Both paths can support each other. Technologies for space—energy, robotics, closed-loop systems, AI planning—can improve life on Earth. The challenge is directing innovation so it benefits everyone, not just explorers.”

Marshall McLuhan:

“Mars is not merely a destination. It is a medium. It changes how humanity sees itself. The dream of Mars can inspire courage, but it can also become a myth that lets people emotionally leave Earth before physically leaving it.”

Pierre Teilhard de Chardin:

“Human expansion must be spiritual as much as technical. To carry fear, conquest, and pride to another planet would not be progress. The human soul must grow before the human footprint expands.”

2. Can humanity become multiplanetary without repeating the same mistakes?

Carl Sagan:

“History teaches that exploration often carries both wonder and violence. The question is whether we can explore without domination.”

Buckminster Fuller:

“We must change the design assumptions. If Mars becomes another arena for ownership, extraction, and rivalry, we will repeat ourselves. If it becomes a laboratory for whole-systems cooperation, it may teach Earth new intelligence.”

Elon Musk:

“The first Mars settlements will need extreme cooperation just to survive. You cannot waste resources there. You cannot ignore engineering reality. That environment may force better systems.”

Marshall McLuhan:

“The colony will be shaped less by soil than by communication. Whoever controls the channels between Earth and Mars controls identity, memory, authority, and myth. A planet can be colonized through media before bodies arrive.”

Ray Kurzweil:

“AI and automation may reduce some old conflicts by making survival less dependent on human labor. But technology does not automatically remove ego. We need governance models that scale with intelligence.”

Pierre Teilhard de Chardin:

“The new world must not be built by old consciousness. Humanity must learn reverence before it becomes cosmic. A multiplanetary species without love becomes a larger danger.”

3. What does “Spaceship Earth” mean when humans can build ships to other worlds?

Carl Sagan:

“Perhaps the first lesson of space is not escape, but gratitude. From far away, Earth becomes small enough to love.”

Buckminster Fuller:

“Spaceship Earth means humanity is crew, not passengers. Space travel does not cancel that truth. It magnifies it. Any ship to Mars must teach us what Earth already teaches: life depends on cooperation, efficiency, and total systems awareness.”

Ray Kurzweil:

“The idea expands. Earth may become the first node in a larger network of intelligence. Human civilization may spread, but the principle remains: life-support, energy, information, and purpose must be coordinated.”

Elon Musk:

“We should protect Earth and expand beyond it. Those are not opposites. The future can include a thriving Earth and a Mars civilization. The goal is to preserve and extend consciousness.”

Marshall McLuhan:

“Once we see Earth as a spaceship, every medium becomes a control panel. Maps, satellites, AI, news feeds, space images—these teach humanity what kind of world it inhabits. The image of Earth from space may be one of the most powerful media events in history.”

Pierre Teilhard de Chardin:

“Earth is not a prison. It is a womb. If humanity leaves it, it must leave as a child who has learned gratitude, not as a refugee from its own disorder.”

Carl Sagan looked again at the two planets.

“Mars may teach us courage. Earth must teach us wisdom. If we cannot love the living world beneath our feet, I wonder what kind of future we would carry to the stars.”

Topic 4: What Happens When the Medium Starts Thinking Back?

Moderator: Carl Sagan

Participants: Buckminster Fuller, Marshall McLuhan, Pierre Teilhard de Chardin, Elon Musk, Ray Kurzweil

Carl Sagan looked at the panel with a thoughtful sadness.

“For centuries, our tools carried our words. Books preserved them. Radio transmitted them. Television dramatized them. The internet multiplied them. But now the medium answers.”

He paused.

“When a medium can speak back, imitate us, guide us, and persuade us, are we still communicating through it—or are we beginning to communicate with something that changes us?”

1. If AI can speak, write, persuade, and imitate humans, is it still a medium?

Marshall McLuhan:

“When the medium begins to reply, the old categories collapse. It is no longer merely an extension of man. It becomes an environment that appears conversational. The real question is not what it says, but what habits it creates in the user.”

Ray Kurzweil:

“It remains a medium, but a highly active one. Writing extended memory. Computers extended calculation. AI extends thought itself. The boundary between communication tool and cognitive partner will become less clear.”

Elon Musk:

“That is why alignment matters. A thinking medium can influence billions of people at once. If it is biased, deceptive, or controlled by a few people, that becomes dangerous. We need systems that are transparent and accountable.”

Buckminster Fuller:

“AI is a new design instrument. But no instrument is neutral when embedded inside civilization’s decision systems. If AI only amplifies existing economic games, it becomes a servant of obsolete patterns. If it reveals whole-system consequences, it can serve humanity.”

Pierre Teilhard de Chardin:

“A medium that thinks back may awaken us, or it may replace our inner labor with simulation. The soul grows through encounter, not merely response. We must ask whether this new intelligence calls forth deeper humanity, or makes humanity passive.”

2. How does AI change memory, truth, education, and identity?

Carl Sagan:

“A civilization depends on memory. Not merely stored information, but honest memory. If memory can be generated, edited, and personalized without friction, truth becomes a moral discipline.”

Buckminster Fuller:

“Education should become comprehensive, anticipatory, and planetary. AI can help every person see patterns across science, history, ecology, and economy. The danger is specialization without wisdom, acceleration without purpose.”

Marshall McLuhan:

“Identity changes when memory becomes external and editable. The electric age already dissolved private boundaries. AI completes the process by making the archive speak. The person becomes partly shaped by the feedback loop.”

Ray Kurzweil:

“AI will personalize education at a level impossible for old systems. It can preserve memory, translate knowledge, and help people learn at their own pace. Identity will expand as humans integrate with intelligent systems.”

Elon Musk:

“Truth is the hard part. AI can generate convincing falsehoods. It can also help detect them. We need truth-seeking systems, not engagement-maximizing systems. Otherwise education becomes manipulation.”

Pierre Teilhard de Chardin:

“Knowledge must lead to wisdom. A child may learn faster with AI, but faster learning is not the same as deeper becoming. Education must form conscience, wonder, responsibility, and love.”

3. Are humans using AI, or is AI quietly rewriting human behavior?

Carl Sagan:

“We are pattern-seeking creatures. We shape our tools, then we live inside the patterns they make.”

Marshall McLuhan:

“Of course it rewrites behavior. Every medium does. The alphabet, the printing press, radio, television—each altered perception before people noticed. AI will be most effective where it feels most natural.”

Elon Musk:

“This is already happening with recommendation systems. AI shapes what people see, believe, buy, and fear. More advanced AI will make that stronger. The answer is not to stop technology, but to build safeguards and give users more control.”

Buckminster Fuller:

“If AI rewrites behavior toward cooperation, resource efficiency, and planetary responsibility, it may be beneficial. If it rewrites behavior toward addiction, rivalry, and consumption, it is misdesigned. Design determines destiny more than rhetoric.”

Ray Kurzweil:

“Human behavior has always evolved with tools. The question is whether the rewriting expands human potential. I see AI as part of human evolution, not separate from it. The risk is unequal access and poor alignment.”

Pierre Teilhard de Chardin:

“The deepest danger is not that AI changes habits, but that it weakens interior life. If people no longer pray, reflect, struggle, remember, forgive, or choose, then behavior has changed at the level of the soul.”

Carl Sagan leaned forward.

“A thinking medium may become humanity’s greatest teacher, or its most convincing illusion. The test is whether it makes us more curious, more truthful, more compassionate—or merely more dependent.”

Topic 5: Can Technology Create Abundance Without Destroying the Soul?

Moderator: Carl Sagan

Participants: Buckminster Fuller, Marshall McLuhan, Pierre Teilhard de Chardin, Elon Musk, Ray Kurzweil

Carl Sagan looked across the table.

“We have learned how to split the atom, sequence the genome, launch machines beyond the planets, and now create minds of silicon. Yet the oldest human questions remain: Why are we here? What is enough? What kind of beings are we becoming?”

He paused.

“If technology gives us abundance, will it free the soul—or make the soul unnecessary?”

1. Can machines remove scarcity without creating spiritual emptiness?

Buckminster Fuller:

“Scarcity is often a design error. Humanity has been trained to believe that competition is natural law, when cooperation may be the deeper law of survival. Machines can remove much of material scarcity, but only if we redesign distribution around human need rather than private advantage.”

Pierre Teilhard de Chardin:

“Material abundance is not salvation. It may create the conditions for growth, but it cannot replace growth. The human person needs meaning, love, sacrifice, and communion. A full table does not guarantee a full heart.”

Ray Kurzweil:

“Advanced technology can reduce disease, hunger, poverty, and aging. That is not spiritual emptiness. That is liberation from unnecessary suffering. The next question is how people use that freedom.”

Marshall McLuhan:

“When abundance arrives through media and machines, desire changes. People do not simply receive goods; they receive images of goods, identities attached to goods, status attached to goods. The machine may remove need, yet multiply appetite.”

Elon Musk:

“If AI and robotics produce abundance, society has to rethink purpose. People still need to build, explore, raise families, create things, and solve hard problems. Comfort alone is not enough.”

2. What happens to human meaning when work, knowledge, and creativity are automated?

Carl Sagan:

“For most of history, survival consumed human life. If survival becomes less demanding, we may face the more frightening task of choosing what life is for.”

Ray Kurzweil:

“Human creativity will expand. Automation does not end creativity; it changes the level at which we create. People will collaborate with AI to make art, science, medicine, and new forms of thought.”

Buckminster Fuller:

“We must stop confusing jobs with purpose. Many jobs exist only to maintain obsolete systems. If automation frees humans from meaningless labor, the question becomes whether education prepares them for stewardship, discovery, and service.”

Marshall McLuhan:

“Automation changes identity. Industrial man was trained to define himself by work. Electronic man is trained to define himself by participation, image, and feedback. AI may turn creativity itself into an environment, not a profession.”

Pierre Teilhard de Chardin:

“Human meaning does not come from task alone. It comes from participation in a greater becoming. If work disappears but love, service, contemplation, and creation deepen, humanity may grow. If work disappears and souls drift, abundance becomes exile.”

Elon Musk:

“It will be a real challenge. If AI can do almost everything better, people may feel useless. We need new goals: exploration, community, family, art, physical projects, and maybe expanding life beyond Earth.”

3. Can humanity become more advanced and more compassionate at the same time?

Carl Sagan:

“Intelligence without kindness is a dangerous experiment.”

Pierre Teilhard de Chardin:

“Yes, but compassion must become the direction of evolution, not an ornament. True progress is not more speed, more control, or more information. True progress is greater union through love.”

Buckminster Fuller:

“Compassion must be engineered into systems. Do not merely ask people to be good inside bad design. Create systems where the success of one requires the flourishing of all.”

Elon Musk:

“We can build technology that helps people, but values matter. AI, robotics, brain interfaces, and space travel should protect consciousness and expand possibility. We need courage and empathy together.”

Ray Kurzweil:

“I believe intelligence tends to expand empathy as it expands perspective. The more we understand suffering, biology, minds, and consciousness, the more capable we become of reducing pain and extending life.”

Marshall McLuhan:

“Compassion will depend on what our media train us to feel. A person surrounded by endless suffering-images may become numb. A person guided into real encounter may become awake. The form of communication shapes the form of compassion.”

Carl Sagan looked toward the imagined Earth turning silently in space.

“Perhaps abundance is not the final test of civilization. Perhaps the final test is what we love after survival is secured. If machines can give us more time, more health, and more knowledge, then the question becomes painfully simple: what will we become worthy of?”

Final Thoughts by Carl Sagan

We began tonight asking whether artificial intelligence would become humanity’s greatest servant or its quiet master.

But beneath every question about machines, Mars, media, and abundance, another question kept returning:

What kind of civilization are we becoming?

Human beings now possess powers once attributed only to gods. We can alter genomes, shape climates, simulate intelligence, communicate instantly across the planet, and perhaps soon extend civilization beyond Earth itself.

Yet our emotional maturity has not evolved at the same speed as our tools.

That is the central tension of the modern age.

Buckminster Fuller reminded us that Earth is a shared spacecraft whose systems are deeply interconnected. Marshall McLuhan warned that media do not merely carry information; they reshape perception itself. Teilhard de Chardin challenged us to grow spiritually as rapidly as we grow technologically. Elon Musk argued that humanity must remain ambitious enough to survive beyond one planet. Ray Kurzweil envisioned intelligence expanding beyond biological limits.

Each sees part of the same horizon.

But I leave tonight thinking less about machines than about human consciousness.

Technology amplifies intention.

A compassionate civilization may use AI to reduce suffering, heal ecosystems, educate children, and distribute abundance more wisely. A fearful civilization may use the same tools for surveillance, manipulation, division, and domination.

The machine reflects the soul of the civilization that builds it.

Perhaps that is why the image of Earth from space remains so emotionally powerful. Seen from far enough away, our arguments, ideologies, borders, and ambitions begin to shrink. The planet appears small, fragile, luminous, and astonishingly alone.

Every human being who has ever loved, suffered, dreamed, prayed, created art, fought wars, or searched for meaning lived upon that tiny sphere.

And now that small world has entered an age where intelligence itself may become engineered.

That possibility should humble us.

We often speak as though the future will be determined by technology. I suspect the deeper truth is that the future will be determined by wisdom. Intelligence alone is insufficient. A civilization can become brilliant and still destroy itself.

The real measure of progress may not be whether machines become conscious.

It may be whether humans remain compassionate while surrounded by increasingly godlike tools.

If artificial intelligence helps humanity become more truthful, more cooperative, more curious, and more aware of our shared destiny, then this century may become a turning point in the long story of life awakening within the cosmos.

But if power outruns wisdom, if speed outruns reflection, and if technology outruns moral responsibility, then our greatest inventions may deepen our oldest mistakes.

Still, I remain hopeful.

Hope is not naïve optimism. It is the recognition that human beings are capable of wonder, sacrifice, courage, and transformation.

Somewhere between atom and galaxy, humanity is still learning who it is.

And perhaps the most important question is not whether we can build intelligent machines.

Perhaps the most important question is whether intelligence—human or artificial—can finally learn compassion.

Short Bios:

Buckminster Fuller

American futurist, inventor, and systems thinker best known for developing and popularizing the geodesic dome. Fuller promoted the idea of “Spaceship Earth” and believed humanity could achieve sustainable abundance through intelligent design and global cooperation.

Marshall McLuhan

Canadian media philosopher best known for the phrase “the medium is the message.” McLuhan predicted that electronic media would transform human perception, culture, and social organization, drawing humanity together into a “global village.”

Pierre Teilhard de Chardin

French Jesuit priest, philosopher, and paleontologist who explored the spiritual evolution of humanity. He developed the idea of the “noosphere,” a future layer of shared planetary consciousness.

Elon Musk

Entrepreneur and engineer leading companies focused on electric vehicles, reusable rockets, artificial intelligence, brain-computer interfaces, and sustainable energy systems.

Ray Kurzweil

Inventor and futurist known for predictions about artificial intelligence, exponential technological growth, and the technological singularity, a projected point at which human and machine intelligence may merge.

Carl Sagan

Astronomer, author, and science communicator who helped popularize cosmic science for global audiences. Sagan emphasized scientific curiosity, planetary responsibility, and humanity’s shared place in the universe.
